On Wednesday, former OpenAI Chief Scientist Ilya Sutskever announced he is forming a new company called Safe Superintelligence, Inc. (SSI) with the goal of safely building “superintelligence,” a hypothetical form of artificial intelligence that surpasses human intelligence, possibly in the extreme.

“We will pursue safe superintelligence in a straight shot, with one focus, one goal, and one product,” wrote Sutskever on X. “We will do it through revolutionary breakthroughs produced by a small cracked team.”

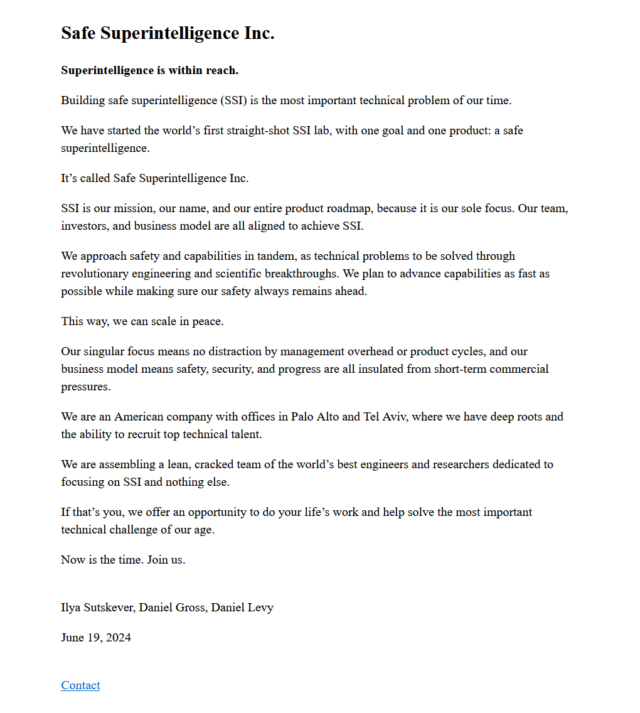

Sutskever was a founding member of OpenAI and formerly served as the company’s chief scientist. Two others are joining Sutskever at SSI initially: Daniel Levy, who formerly headed the Optimization Team at OpenAI, and Daniel Gross, an AI investor who worked on machine learning projects at Apple between 2013 and 2017. The trio posted a statement on the company’s new website.

Sutskever and several of his co-workers resigned from OpenAI in May, six months after Sutskever played a key role in ousting OpenAI CEO Sam Altman, who later returned. Sutskever did not publicly complain about OpenAI after his departure, and OpenAI executives such as Altman wished him well on his new adventures. But another resigning member of OpenAI’s Superalignment team, Jan Leike, publicly complained that “over the past years, safety culture and processes [had] taken a backseat to shiny products” at OpenAI. Leike joined OpenAI competitor Anthropic later in May.

A nebulous idea

OpenAI is currently seeking to create AGI, or artificial general intelligence, which would hypothetically match human intelligence at performing a wide variety of tasks without specific training. Sutskever hopes to jump beyond that in a straight moonshot attempt, with no distractions along the way.

“This company is special in that its first product will be the safe superintelligence, and it will not do anything else up until then,” said Sutskever in an interview with Bloomberg. “It will be fully insulated from the outside pressures of having to deal with a large and complicated product and having to be stuck in a competitive rat race.”

During his former job at OpenAI, Sutskever was part of the “Superalignment” team studying how to “align” (shape the behavior of) this hypothetical form of AI, sometimes called “ASI” for “artificial super intelligence,” to be beneficial to humanity.

As you can imagine, it’s difficult to align something that does not exist, so Sutskever’s quest has met skepticism at times. On X, University of Washington computer science professor (and frequent OpenAI critic) Pedro Domingos wrote, “Ilya Sutskever’s new company is guaranteed to succeed, because superintelligence that is never achieved is guaranteed to be safe.”

Much like AGI, superintelligence is a nebulous term. The mechanics of human intelligence are still poorly understood, and human intelligence is difficult to quantify or define because there is no one set type of it, so identifying superintelligence when it arrives may be tricky.

Already, computers far surpass humans in many forms of information processing (such as basic math), but are they superintelligent? Many proponents of superintelligence imagine a sci-fi scenario of an “alien intelligence” with a kind of sentience that operates independently of humans, and that is more or less what Sutskever hopes to achieve and control safely.

“You’re talking about a giant super data center that’s autonomously developing technology,” he told Bloomberg. “That’s crazy, right? It’s the safety of that that we want to contribute to.”