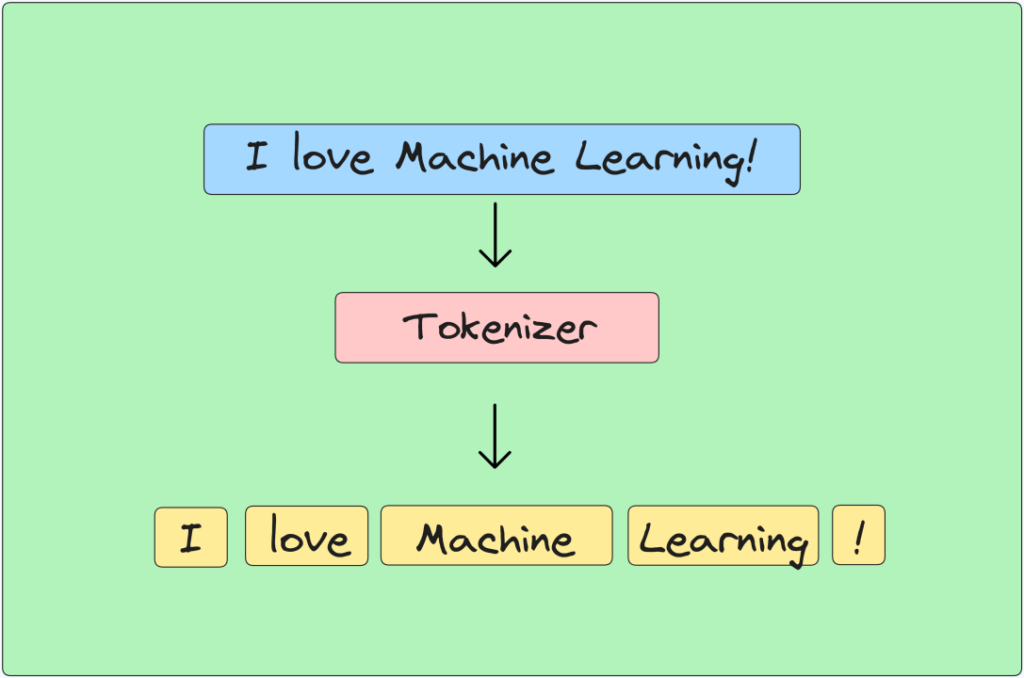

Have you ever wondered how text is broken down into meaningful units in natural language processing (NLP)? Let’s explore the fundamental concept of tokenization using NLTK (Natural Language Toolkit) in Python.

How can NLTK help in tokenizing text effectively?

Tokenization is a crucial step in natural language processing: it breaks text down into manageable units such as sentences, words, or characters. NLTK provides robust tools for this. Let’s explore, step by step, how NLTK facilitates tokenization:

Step 1: Installing NLTK

Have you installed NLTK yet? If not, here’s a quick command to get started:

pip install nltk

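Step 2: Importing NLTK and Downloading Resources

What resources does NLTK require for tokenization? Let’s set up NLTK for our tokenization tasks:

import nltk

# Download the Punkt models used by sent_tokenize and word_tokenize
nltk.download('punkt')

# Recent NLTK releases (3.9 and later) look for 'punkt_tab' instead;
# download it as well if the tokenizers raise a LookupError
nltk.download('punkt_tab')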

Step 3: Tokenizing Text into Sentences

How does sent_tokenize break text down into sentences? Let’s see it in action:

from nltk.tokenize import sent_tokenize

text = "Hello there! How are you doing today? This is an example text for sentence tokenization."

# Tokenize text into sentences
sentences = sent_tokenize(text)
print(sentences)
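To see why a trained sentence tokenizer is useful, it helps to compare it with a naive rule. The sketch below is not NLTK code: `naive_sent_split` is a hypothetical helper, written with only Python's standard `re` module, that splits after `.`, `!`, or `?` when whitespace follows. It copes with the example text above, but it also splits after the abbreviation "Dr.", which is the kind of case a trained tokenizer is designed to handle.

```python
import re

def naive_sent_split(text):
    """Hypothetical helper: split after ., ! or ? followed by whitespace."""
    return re.split(r'(?<=[.!?])\s+', text.strip())

text = "Hello there! How are you doing today? This is an example text for sentence tokenization."
print(naive_sent_split(text))

# The same rule wrongly treats the period in an abbreviation as a boundary.
print(naive_sent_split("Dr. Smith arrived. He sat down."))
```

The first call yields three sentences; the second yields three pieces instead of two, because "Dr." is cut off from the rest of its sentence.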

Step 4: Tokenizing Text into Words

How does word_tokenize split text into words effectively? Let’s explore:

from nltk.tokenize import word_tokenize

text = "Hello there! How are you doing today?"

# Tokenize text into words (punctuation marks become separate tokens)
words = word_tokenize(text)
print(words)
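As a point of comparison, here is a minimal baseline that uses only Python's built-in `str.split` and no NLTK at all. It is meant as an illustration of what whitespace splitting leaves behind: punctuation stays glued to the neighboring word, whereas a word tokenizer emits it as a separate token. The variable names are chosen for this sketch only.

```python
text = "Hello there! How are you doing today?"

# Baseline: split on whitespace only (no NLTK involved).
whitespace_tokens = text.split()
print(whitespace_tokens)

# Tokens that still carry punctuation a word tokenizer would split off.
with_punct = [tok for tok in whitespace_tokens if not tok.isalnum()]
print(with_punct)
```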

Step 5: Tokenization with Custom Delimiters

How can RegexpTokenizer help tokenize based on custom rules? Let’s customize tokenization:

from nltk.tokenize import RegexpTokenizer

text = "Hello there! How are you doing today? This is an example text."

# Tokenize text using a custom regular expression (words only, punctuation dropped)
tokenizer = RegexpTokenizer(r'\w+')
words = tokenizer.tokenize(text)
print(words)
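With its default settings, RegexpTokenizer returns the non-overlapping matches of its pattern, so the `\w+` pattern can be explored with the standard library alone. The sketch below uses `re.findall` rather than NLTK, purely to show what the pattern matches. Note that `\w` also matches digits and underscores, so "words only" is an approximation.

```python
import re

text = "Hello there! How are you doing today? This is an example text."

# Every run of word characters becomes a token; punctuation is discarded.
tokens = re.findall(r'\w+', text)
print(tokens)

# \w also matches digits and underscores, not just letters.
print(re.findall(r'\w+', "user_42 logged in"))
```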

Step 6: Tokenizing with TreebankWordTokenizer

What is TreebankWordTokenizer, and how does it tokenize text? Let’s look at its functionality:

from nltk.tokenize import TreebankWordTokenizer

text = "It has been a long day. Cannot wait to relax!"

# Tokenize text using the Penn Treebank conventions
tokenizer = TreebankWordTokenizer()
words = tokenizer.tokenize(text)
print(words)

Step 7: Tokenizing Text Using NLTK’s WordPunctTokenizer

How does WordPunctTokenizer handle text that contains punctuation? Let’s explore its capabilities:

from nltk.tokenize import WordPunctTokenizer

text = "Hello there! How's everything going?"

# Tokenize text using WordPunctTokenizer
tokenizer = WordPunctTokenizer()
words = tokenizer.tokenize(text)
print(words)
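NLTK documents WordPunctTokenizer as splitting text with the regular expression `\w+|[^\w\s]+`, that is, runs of word characters or runs of punctuation. The sketch below applies that same pattern with Python's standard `re` module instead of NLTK, as a way to see why a contraction such as "How's" ends up as three tokens. The second input string is an extra example added for illustration.

```python
import re

# Pattern taken from the WordPunctTokenizer documentation.
pattern = r"\w+|[^\w\s]+"

text = "Hello there! How's everything going?"
tokens = re.findall(pattern, text)
print(tokens)

# Consecutive punctuation characters stay together as one token.
print(re.findall(pattern, "Wait... what?!"))
```

The apostrophe is neither a word character nor whitespace, so it becomes a token of its own between "How" and "s".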

Step 8: Tokenizing Text into Character N-grams

For advanced tasks, how can we tokenize text into character n-grams? Let’s implement a custom function:

def char_ngrams(text, n):
    # Slide a window of length n across the text, one character at a time
    ngrams = [text[i:i + n] for i in range(len(text) - n + 1)]
    return ngrams

text = "hello"
n = 3

# Tokenize text into character trigrams
trigrams = char_ngrams(text, n)
print(trigrams)
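The function above assumes a sensible value of n. One possible hardening, shown here as a sketch, is to reject non-positive values explicitly, since n = 0 would otherwise produce a list of empty strings. `char_ngrams_safe` is a hypothetical name used for this example only.

```python
def char_ngrams_safe(text, n):
    """Hypothetical variant of char_ngrams that validates n."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    # range() is empty when len(text) < n, so short inputs give [].
    return [text[i:i + n] for i in range(len(text) - n + 1)]

print(char_ngrams_safe("hello", 3))  # ['hel', 'ell', 'llo']
print(char_ngrams_safe("hello", 2))  # ['he', 'el', 'll', 'lo']
print(char_ngrams_safe("hi", 3))     # []
```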

How do these tokenization techniques together enhance your understanding of text processing in NLP?

From sentence and word segmentation to handling custom delimiters and character n-grams, mastering these NLTK tokenization techniques lets you preprocess text data effectively for a variety of NLP applications.