I’m beginning off with one of many easiest machine studying methods: linear regression. The mathematical a part of this put up requires a very good working information of linear algebra and calculus to comply with, which would be the case for the remainder of the sequence. That is unavoidable since these topics underpin an enormous quantity of machine studying and are stipulations for a deep understanding. With that mentioned, let’s dive in.

Linear regression is the duty of taking a sequence of factors and discovering a line of greatest match. Earlier than we are able to work out discover a line of greatest match, we have to perceive what that truly means.

We are able to intuitively inform that the road in Determine 1 is a greater match to the information factors than say, this one:

Why? As a result of the factors in Determine 2 are farther away from the road than these in Determine 1. Let’s work out mathematically formalize this instinct so we are able to outline exactly what “greatest match” means.

Let’s begin off in two dimensions. On this case, knowledge factors are (x, y) pairs and may be simply visualized on a graph just like the figures above. We wish to discover a linear perform f(x) = kx which greatest represents the information. Word that this mannequin assumes a line that passes by way of the origin. We gained’t take into account the potential of an intercept aside from the origin but (we’ll get to that shortly).

Say we’ve a set of n knowledge factors,

For every x-value, we are able to use our mannequin to acquire a predicted y-value. This example, with just one unbiased variable (x) and one dependent variable (y) is called easy linear regression. I’ll use a primary image to distinguish the expected y values from the precise y-values. So, the mannequin’s predicted y-value for a given x-value is given by the components

Now let’s put all of the x values right into a vector, and the y values into one other vector.

That is known as vectorization, and has a number of advantages for issues in knowledge science. Combining a number of particular person values into vectors makes mathematical formulation rather more compact and simpler to purpose about. Vectorization in code additionally typically improves efficiency. It’s a lot quicker to carry out vector arithmetic on a big array of values than to undergo utilizing a for loop and function on every one after the other. Many numerical computing libraries like Numpy permit doing vector arithmetic very quick. It additionally permits for parallelization with {hardware} like GPUs, the place operations are executed concurrently to totally different components of an array. As soon as once more, this wouldn’t be doable utilizing a loop, the place every operation must occur one after the opposite.

We are able to additionally create a vector containing all of our predicted y-values:

As a way to discover the road of greatest match, we have to understand how far y’ is from the vector of precise values, y. We are able to take a look at the distinction of the 2 vectors: y’ — y. Nevertheless, it is a vector and we wish a single quantity representing the error of the mannequin. We’re going to use sum-of-squares error (SSE), which equal to ||y’ — y||², the squared magnitude of the distinction vector. It’s known as “sum-of-squares” as a result of it’s equal to the sum of the squared entries of y’ — y:

As for why we use ||y’ — y||² as an alternative of simply ||y’ — y||, one reply is that squared magnitude is simply a lot easier to work with. ||y’ — y|| has an additional sq. root image exterior the summation:

This might make working with the components a LOT extra tedious when taking derivatives, as an illustration.

Now that we’ve outlined the error of a linear regression mannequin, we have to learn the way to reduce it. Let’s broaden out the expression for SSE.

If we substitute in okayx for y’ as per Equation 1, we get

We have to discover the worth of okay that minimizes the error when x and y are held fixed. To take action, we are able to set the spinoff of Expression 1 w.r.t. okay to zero and clear up:

This offers us the worth of okay which minimizes the sum-of-squares error. Armed with this data, we are able to code a SimpleLinearRegressor. It’ll have only one occasion variable — the slope, okay.

class SimpleLinearRegressor:

"""Performs easy linear regression."""def __init__(self):

self.okay = None

It would have a predict technique that takes a quantity or array of numbers as a parameter and provides a prediction or array of predictions in consequence.

def predict(self, x):

"""

Provides predicted y-values from an enter x-value, or vector of x-values.

:param x: The enter worth(s).

:return: The expected y-value(s).

"""if self.okay is None:

increase RegressionModelNotFitError('Oh no! The mannequin has not been match!')

return self.okay * x

It would additionally want a match technique that finds the right worth of okay based mostly on the x and y vectors utilizing Equation 2. That is the true guts of the category.

def match(self, x, y):

"""

Suits the mannequin based mostly on the given vectors of x and y values.

:param x: A vector of x-values.

:param y: A vector of y-values.

:return: The sum-of-squares error of the fitted mannequin.

"""self.okay = x @ y / (x @ x)

diff = self.predict(x) - y

return diff @ diff

We should always generate some knowledge to check the mannequin. I’ll create a perform that generates a vector of random x-values inside a variety, computes corresponding y-values utilizing a linear mannequin, after which provides Gaussian noise to these y-values.

def generate_noisy_data(n_points, slope, x_range, noise_stddev):

"""

Generates 2nd knowledge factors based mostly on a linear relationship with added Gaussian noise.

:param n_points: The variety of knowledge factors to generate.

:param slope: The slope of the road.

:param x_range: The vary from which to attract x-values.

:param noise_stddev: The usual deviation of the Gaussian noise so as to add to every y-value.

:return: Vectors of x and y values.

"""x = np.random.uniform(*x_range, n_points)

y = slope * x + np.random.regular(scale=noise_stddev, dimension=n_points)

return x, y

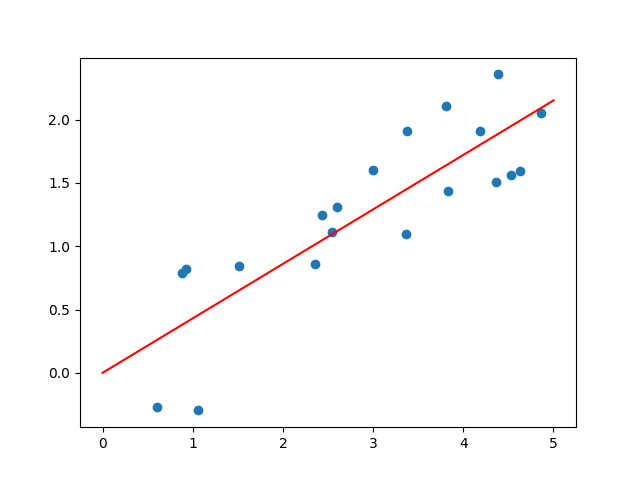

Let’s see how nicely the SimpleLinearRegressor can get better the unique slope from the randomized knowledge. I’ll use matplotlib for visualization.

x_range = np.array([0, 5])

x, y = generate_noisy_data(n_points=20, slope=0.42, x_range=x_range, noise_stddev=0.5)

plt.scatter(x, y)regressor = SimpleLinearRegressor()

match = regressor.match(x, y)

slope = regressor.okay

plt.plot(x_range, [0, 2 * x_range[1]], coloration='crimson')

plt.textual content(3, 0, f'Error: {"{:.2f}".format(match)}nPredicted slope: {"{:.2f}".format(slope)}')

plt.present()

Right here’s the output of 1 run:

As you’ll be able to see, it does a superb job! The slope predicted by the regression mannequin is the same as the slope enter of generate_noisy_data to no less than 2 decimal locations.

We’ve seen carry out linear regression with only one unbiased variable x, and one dependent variable, y. Now let’s suppose that y relies on m unbiased variables, so we’re working with (m + 1)-dimensional knowledge. We’d have n knowledge factors which seem like this:

Right here x_ij denotes worth of the jth unbiased variable within the ith knowledge level.

Consolidating our knowledge by way of vectorization is at all times a very good first step.

We are able to acquire all of the y-values right into a vector as earlier than:

Nevertheless, now that our x knowledge has two indices, utilizing a vector for the xs is not perfect. As an alternative we are able to acquire them right into a matrix the place every row is a single knowledge level:

I’ll use capital X_ij and lowercase x_ij any more interchangeably to consult with entries on this matrix.

The linear mannequin which we’re making an attempt to suit to our knowledge now appears to be like a bit extra sophisticated:

We’ve got m coefficients, or “slopes”, one for every unbiased variable, denoted by βs.

We are able to make a vector of every knowledge level

The matrix X may be regarded as having every of those vectors as its rows:

Let’s additionally make a vector of all of the β coefficients

Equation 3 can then be expressed very concisely as

Nevertheless, we are able to get much more concise by combining the equations for every predicted worth y’_i right into a vector of all the expected values.

Identical to in easy linear regression, we’ll be making an attempt to reduce the sum-of-squares error ||y’ — y||²

Utilizing Equation 4 we are able to broaden out the components when it comes to X, y, and β.

Look acquainted? It’s rather a lot just like the components for error in easy linear regression. We have to discover the worth of β which minimizes it. First word that the ||y||² time period is irrelevant because it doesn’t rely upon β, so we’re actually making an attempt to reduce

We might cease right here since Numpy has a numpy.linalg.lstsq technique that might discover the right worth of β with simply X and y as enter. Whereas technically not in opposition to my rule of solely utilizing Python and Numpy for computations, this feels an excessive amount of like dishonest in a put up about “linear regression from scratch.” As an alternative, I’m going to dive into some math.

As a way to discover β which is able to decrease Expression 2, we should always set its gradient w.r.t. β equal to zero and clear up. To do that, we’ll convert Expression 2 into componentwise type after which take the spinoff w.r.t every part of β individually.

Utilizing the componentwise components for the dot product,

and for matrix-vector multiplication,

we are able to convert Expression 2 to componentwise type:

Now let’s take the spinoff of Expression 3 w.r.t. a specific part of β, β_l:

To simplify Expression 4, we’ll must take the spinoff of two summations:

and

Let’s sort out every of them individually.

Expression 5 By-product

Expression 5 may be expanded out as:

In English, this implies “the half the place neither j nor okay is the same as l, plus the half the place okay is the same as l however j is just not, plus the half the place j is the same as l however okay is just not, plus the half the place j and okay are each equal to l.” Since each j and okay should both be equal to or not equal to l, these 4 sums cowl all prospects. All of them collectively thus equal the unique summation of Expression 5.

Discover that the 2 center sums in Expression 7 are equivalent aside from the title of an index variable (j vs okay). For the reason that title is bigoted and we might simply as simply rename the index variable within the third sum to j, these two sums have the identical worth. Thus the expression may be rewritten as

Now we are able to take the spinoff:

Word that the primary time period turns into zero because it doesn’t comprise a β_l.

Expression 6 By-product

Discovering the spinoff of Expression 6 is far easier:

Right here as soon as once more we break up the second summation into an element containing β_l and an element not containing β_l. The latter is zeroed out throughout differentiation.

Placing it All Collectively

Now let’s substitute the derivatives we simply discovered, Expressions 8 and 9, again into Expression 4 and simplify. We are able to then convert again from componentwise type to vector type.

At this level we are able to use the id

to remodel Equation 5 additional:

And we’re executed! Any β for which the gradient of the error is zero should obey Equation 6.

Chances are you’ll be questioning whether or not setting the gradient to zero like that is truly a legitimate technique of discovering an optimum resolution. In spite of everything, this might merely discover a native minimal moderately than the worldwide minimal. Happily, linear regression is a convex optimization drawback. This math stack exchange answer gives a proof. An vital property of a convex optimization drawback is that any native minimal can be a worldwide minimal, so there’s nothing to fret about.

Now that we’re assured of our resolution’s correctness, we have to clear up Equation 6 for β. Numpy gives a numpy.linalg.clear up perform, nevertheless it solely works if the equation has only one resolution. Another choice can be changing the matrix to diminished row echelon type, however considerably surprisingly Numpy doesn’t have a utility for this. After some digging I found numpy.linalg.qr, which performs QR factorization of an enter matrix. An answer on math stack exchange and its feedback had been instrumental in serving to me learn to use QR factorization for equation fixing.

If we wish to clear up the linear equation Ax = b the place A is a sq. matrix (word that X^TX have to be sq.), we are able to begin by discovering an orthonormal sq. matrix Q and an higher triangular matrix R such that QR = A. The equation Ax = b turns into QRx = b. Since Q have to be invertible, the equation could also be additional remodeled into Rx = Q^-1b. R is higher triangular and the suitable facet is only a vector, so all I actually need is the power to unravel Uv = w the place U is an higher triangular matrix.

I created a perform, solve_upper_triangular, to carry out the duty. I gained’t go into an excessive amount of element about its workings since this put up isn’t about clear up linear equations, however primarily it begins on the final row of the matrix and works backwards, at every row substituting in any beforehand set values for variables. It then assigns the worth 1 to all however one of many remaining variables with nonzero coefficients within the row and solves for that remaining variable when it comes to all of the others.

def solve_upper_triangular(a, b):

"""

Solves the linear equation ax = b for x.

:param a: An n x n higher triangular matrix.

:param b: An n-dimensional vector.

:return: A vector x for which ax = b.

"""tracker = np.zeros(a.form[1])

outcome = np.zeros(a.form[1])

for row, val in zip(a[::-1], b[::-1]):

unset_var_indices = np.the place((tracker == 0) & (row != 0))[0]

if len(unset_var_indices) == 0:

if np.isclose(outcome @ row, val):

proceed

else:

increase UnsolvableError('The given values of a and b lead to an unsolvable equation.')

tracker[unset_var_indices] = 1

outcome[unset_var_indices[1:]] = 1

i = unset_var_indices[0]

outcome[i] = (val - outcome @ row) / row[i]

return outcome

I’m now able to create a MultipleLinearRegressor.

class MultipleLinearRegressor:

"""Performs a number of linear regression."""def __init__(self):

self.beta = None

Just like the SimpleLinearRegressor, it would have a predict technique and a match technique.

The previous merely calculates the matrix product between a matrix X or vector x and β.

def predict(self, x):

"""

Provides predicted y-values from a given array of x-values.

:param x: Vector or matrix of x-values.

:return: Vector of predicted y-values.

"""if self.beta is None:

increase RegressionModelNotFitError('Oh no! The mannequin has not been match!')

return x @ self.beta

The match technique solves Equation 6 by QR factorizing X^TX after which utilizing solve_upper_triangular to search out the answer of Rβ = Q^-1X^Ty. It additionally returns the sum of squares error for the fitted mannequin.

def match(self, x, y):

"""

Suits the mannequin based mostly on a matrix of x-values and vector of corresponding y-values.

:param x: Matrix of x-values.

:param y: Vector of y-values.

:return: The sum-of-squares error of the fitted mannequin.

"""x_t = x.transpose()

q, r = np.linalg.qr(x_t @ x)

vec = np.linalg.inv(q) @ x_t @ y

self.beta = solve_upper_triangular(r, vec)

diff = self.predict(x) - y

return diff @ diff

Let’s see how the MultipleLinearRegressor performs. I’ll create a generate_noisy_data perform similar to the earlier one however which takes a vector β of parameters and generates a matrix X and vector y of information factors, then provides Gaussian noise to the y-values as earlier than.

def generate_noisy_data(n_data_points, n_independent_variables, beta, x_range, noise_stddev):

"""

Generates knowledge factors based mostly on a linear relationship with added Gaussian noise.

:param n_data_points: The variety of knowledge factors to generate.

:param n_independent_variables: The variety of unbiased variables in every knowledge level.

:param beta: The vector of coefficients for the unbiased variables.

:param x_range: The vary from which to attract x-values.

:param noise_stddev: The usual deviation of the Gaussian noise so as to add to every y-value.

:return: Matrix of x-values and vector of y-values.

"""x = np.random.uniform(*x_range, (n_data_points, n_independent_variables))

y = x @ beta + np.random.regular(scale=noise_stddev, dimension=n_data_points)

return x, y

Now generate some knowledge and see how nicely the regressor recovers the unique β.

regressor = MultipleLinearRegressor()

x, y = generate_noisy_data(n_data_points=500,

n_independent_variables=10,

beta=np.array([-10, 5, -8, -2, 1, -3, 4, -5, -1, 3]),

x_range=np.array([-100, 100]),

noise_stddev=50)

sse = regressor.match(x, y)

print(f'Sum Squared Error: {sse}')

print(f'Beta: {regressor.beta}')

The output from one run is

Sum Squared Error: 1259196.6403705715

Beta: [-9.95436533 5.02469925 -7.95431319 -1.97266714 1.03726794 -2.95935233

4.03854255 -4.98106051 -1.01840914 3.0410695]

As we are able to see it does a fairly good job of getting near the unique parameter values.

What about Bias?

Up to now I’ve solely mentioned regression fashions with a y-intercept of zero. Nevertheless, this could not be a very good match for all knowledge. What if we wish a mannequin like f(x) = x⋅β + b, the place b is a few nonzero fixed? The worth b is usually known as a bias within the context of machine studying, because it “biases” the information in the direction of a particular worth even when all of the mannequin’s inputs are zero.

I’ve left this consideration for a fast addendum as a result of it seems including bias in regression fashions doesn’t require a lot effort: It’s equal to including an additional unbiased variable to the information which at all times takes the worth 1. For instance, if we had some two dimensional knowledge and wished so as to add bias to our regressor, as an alternative of becoming a mannequin of the shape f(x) = kx, we might match the mannequin

The variable x_1 would characterize our unique knowledge, and x_2 can be set equal to 1 in each datapoint. A datapoint (x, y) would thus turn out to be (x_1, x_2, y) = (x, 1, y). Plugging in x_1 = x and x_2 = 1, Equation 7 reduces to

Right here β_1 is the slope and β_2 is the bias. We are able to match this mannequin utilizing a number of linear regression. For increased dimensional knowledge, the method works equally.

There we’ve it! That’s linear regression from scratch. I hope you loved and realized one thing. I’d welcome any suggestions and constructive criticism. Keep tuned for my subsequent put up, the place I’ll cowl linear classification.

All of the code is obtainable on github.