In this blog, we will cover common machine learning terminology and build a simple application that lets users ask questions about a PDF on their local machine for free. I've tested this on a resume PDF, and it seems to give good results. While fine-tuning for better performance is possible, it's beyond the scope of this blog.

_______________________________________________________________

What are Models?

In the context of Artificial Intelligence (AI) and Machine Learning (ML), "models" refer to mathematical representations or algorithms trained on data to perform specific tasks. These models learn patterns and relationships within the data and use that knowledge to make predictions, classifications, or decisions.

What are Embeddings?

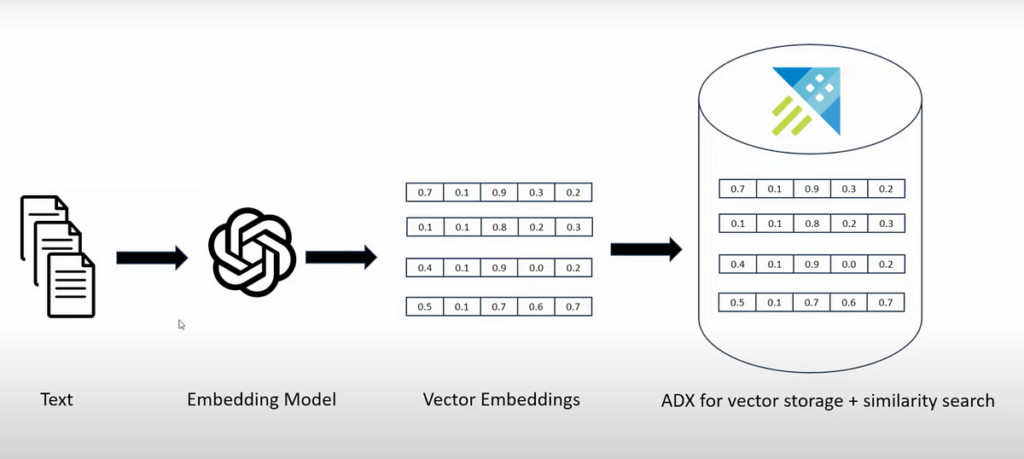

Embeddings, in simple terms, are compact numerical representations of data. They take complex data (like words, sentences, or images) and translate it into a list of numbers (a vector) that captures the key features and relationships within the data. This makes it easier for machine learning models to understand and work with the data.
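
To make the idea of "vectors that capture relationships" concrete, here is a minimal sketch that compares vectors with cosine similarity. The three-dimensional vectors are made up for illustration; they are not output from any real embedding model, which would typically produce hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical, hand-written vectors (assumption: not from a real model).
cat = [0.9, 0.1, 0.0]
kitten = [0.8, 0.2, 0.1]
invoice = [0.0, 0.1, 0.9]

# Related concepts should score higher than unrelated ones.
print(cosine_similarity(cat, kitten) > cosine_similarity(cat, invoice))
```

A score close to 1 means the two vectors point in nearly the same direction, which is how "similar meaning" is usually measured between embeddings.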

What are Embedding Models?

Embedding models are tools that transform complex data (like words, sentences, or images) into simpler numerical forms called embeddings.

What are vector databases?

Vector databases are specialised databases designed to store, index, and query high-dimensional vectors efficiently. These vectors, usually generated by machine learning models, represent data like text, images, or audio in a numerical format. Vector databases are optimized for tasks involving similarity searches and nearest neighbour queries, which are common in applications like recommendation systems, image retrieval, and natural language processing. We store embeddings in a vector database.

Examples of vector databases: ChromaDB, Pinecone, ADX, FAISS…
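
As a rough illustration of what a similarity query does, the sketch below implements a tiny in-memory store with brute-force search. `TinyVectorStore` and its methods are hypothetical names invented for this example; real vector databases add persistence and indexes that avoid comparing the query against every stored vector.

```python
import math


class TinyVectorStore:
    """Toy in-memory store: keeps (text, vector) pairs and scans them all."""

    def __init__(self):
        self._items = []

    def add(self, text, vector):
        self._items.append((text, list(vector)))

    def query(self, vector, n_results=2):
        """Return the n_results stored texts most similar to the query vector."""
        scored = [(self._cosine(vector, v), text) for text, v in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:n_results]]

    @staticmethod
    def _cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm


store = TinyVectorStore()
# Hypothetical 2-dimensional vectors, chosen by hand for the example.
store.add("Python developer", [1.0, 0.0])
store.add("Java developer", [0.8, 0.6])
store.add("Likes hiking", [0.0, 1.0])

print(store.query([1.0, 0.1], n_results=2))
```

The `n_results` argument plays the same role as the "number of results" you specify when querying a real vector database.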

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence model that processes and generates human-like text based on the patterns and knowledge it has learned from large amounts of text data. It is designed to understand and generate natural language, making it useful for tasks like answering questions, writing essays, translating languages, and more. Examples of LLMs include GPT-3, GPT-4, and BERT.

_______________________________________________________________

Workflow:

1. Convert the PDF to text.

2. Create embeddings.

   - Recursively split the text into chunks and create an embedding for each chunk.

   - Use an embedding model to create the embeddings. In our case we are using model="nomic-embed-text", provided by the Ollama library.

   - Store the embeddings in a vector database (in our example we have used ChromaDB).

3. Take the user's question and create an embedding for the question text.

4. Query your vector database to find similar embeddings, specifying the number of results you need. ChromaDB performs a similarity search to return the best matches.

5. Pass the user's question plus the retrieved results as context to the LLM to generate a well-formed answer. In our example we have used model="llama3".
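
Steps 2 and 5 can be sketched in plain Python. The splitter below is a simplified stand-in for the recursive splitting idea (try paragraph breaks first, then line breaks, then spaces, then a hard cut); it is not LangChain's implementation. The function names, the `chunk_size` value, and the prompt wording are all assumptions made for illustration.

```python
def recursive_split(text, chunk_size=200, separators=("\n\n", "\n", " ")):
    """Split text into chunks of at most chunk_size characters."""
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    for sep in separators:
        if sep not in text:
            continue
        chunks, current = [], ""
        for part in text.split(sep):
            candidate = current + sep + part if current else part
            if len(candidate) <= chunk_size:
                current = candidate
                continue
            if current:
                chunks.append(current)
            current = ""
            if len(part) > chunk_size:
                # The piece is still too long: retry with finer separators.
                chunks.extend(recursive_split(part, chunk_size, separators))
            else:
                current = part
        if current:
            chunks.append(current)
        return [c for c in chunks if c.strip()]
    # No separator present at all: fall back to fixed-size slices.
    return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]


def build_prompt(question, context_chunks):
    """Combine the retrieved chunks and the user's question into one prompt."""
    context = "\n\n".join(context_chunks)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


sample = "word " * 100
pieces = recursive_split(sample, chunk_size=50)
print(len(pieces), max(len(p) for p in pieces))
print(build_prompt("What skills are listed?", pieces[:2]))
```

In the real application each chunk would be embedded and stored, and `context_chunks` would be the results returned by the similarity search in step 4.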

Prerequisites:

- Install Python.

- To run models locally, download Ollama from https://ollama.com/. Ollama is an open-source project that serves as a powerful and user-friendly platform for running LLMs on your local machine.

- If you wish, you can use other models provided by OpenAI and Hugging Face.

- For a quick start, just run: ollama run llama3

- To download the embedding model, run: ollama pull nomic-embed-text

- Choose a suitable model from the Ollama library.

- Install Jupyter and create an .ipynb notebook.

Test the installation:

    import requests

    # Ollama listens on port 11434 by default.
    res = requests.post(
        'http://localhost:11434/api/embeddings',
        json={
            'model': 'nomic-embed-text',
            'prompt': 'Hello world',
        },
    )
    print(res.json())

    # In our example we will be using the LangChain framework.
    # LangChain provides a library to interact with Ollama.
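
If you would rather not depend on `requests`, the same check can be sketched with the standard library. The helper names below are hypothetical, and the sketch assumes the endpoint replies with a JSON object containing an `embedding` list; anything else is treated as an error.

```python
import json
from urllib import request

# Default local endpoint, as used in the installation test.
OLLAMA_URL = "http://localhost:11434/api/embeddings"


def build_request(text, model="nomic-embed-text", url=OLLAMA_URL):
    """Build the POST request for the embeddings endpoint."""
    body = json.dumps({"model": model, "prompt": text}).encode("utf-8")
    return request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_embedding(payload):
    """Return the embedding as a list of floats, or raise if it is missing."""
    vector = payload.get("embedding")
    if not isinstance(vector, list) or not vector:
        raise ValueError(f"no embedding in response: {payload!r}")
    return [float(x) for x in vector]


def embed(text, send=request.urlopen):
    """Send the request and parse the reply (requires a running Ollama server)."""
    with send(build_request(text), timeout=30) as response:
        return extract_embedding(json.load(response))
```

Calling `embed("Hello world")` with Ollama running should return a list of floats; if the model has not been pulled yet, `extract_embedding` raises instead of silently returning nothing.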