Datasets

Optimize processes, reduce manual effort, and gain powerful insights and predictions with comprehensive AI capabilities built right into your 24x7offshoring business applications.

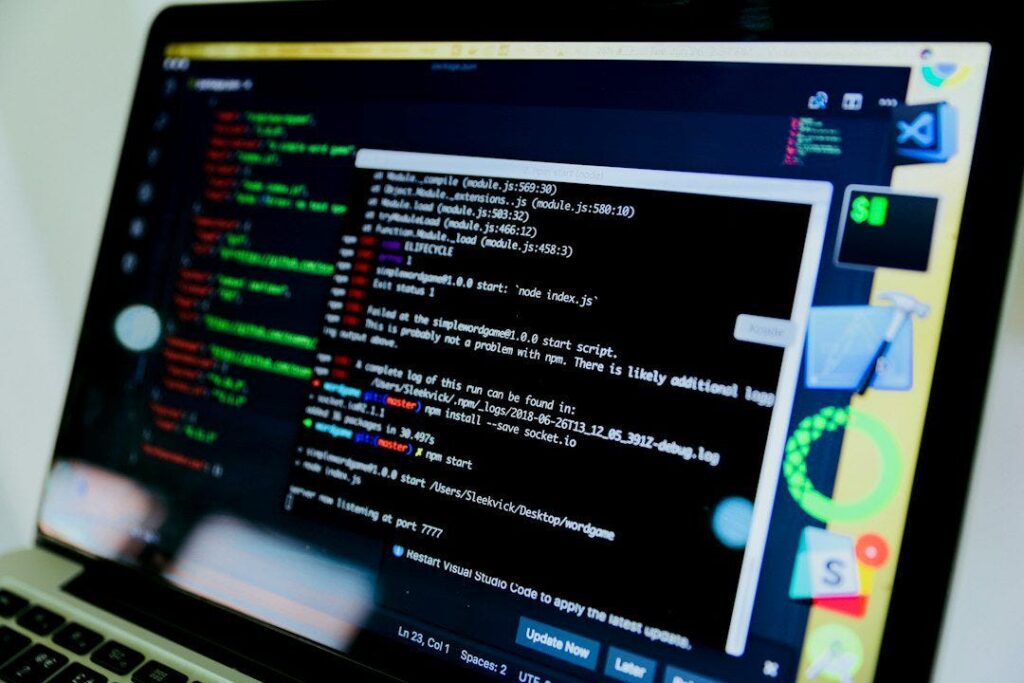

A cat on a computer screen representing 24x7offshoring Business AI.


Relevant

Empower business performance from day one with AI built into 24x7offshoring applications across all your business processes.

Reliable

Optimize your business outcomes with AI based on real data from your business and industry, with the help of 24x7offshoring.

Responsible

Implement responsible and trusted AI based on the highest standards of ethics, security, and privacy.

What you'll learn

- How to scale data for AI. Despite the vast amounts of data in the world today, obtaining enough data suitable for AI tasks is difficult and requires some creativity.

- Practices for testing bias. Whether intentional or unintentional, bias is built into all AI engines.

- Tips for labeling data. Labeling data is a critical training step, and you cannot afford to waste time or resources.

- How to get the most out of your AI algorithm. Unbiased feedback builds confidence that your algorithm is not only relevant to customers but also adds value to their experience. Without it, you put the accuracy of your model at risk.

Artificial intelligence and machine learning are taking us headlong into an increasingly digital world. AI/ML enables organizations to streamline processes, allowing them to move faster without sacrificing quality. However, a successful algorithm requires extensive training and testing data to deliver reliable results and avoid bias.

Training and testing lay the foundation for a successful AI algorithm and a reliable engine. This guide discusses common challenges companies face while training and testing their AI algorithms, and offers advice on how to avoid or mitigate them.

Why is machine learning important?

Machine learning is a form of artificial intelligence (AI) that teaches computers to think in a way similar to humans: by learning from and improving on past experience. Almost any task that can be completed with a pattern defined by data or a set of rules can be automated with machine learning.

So why is machine learning important? It allows companies to transform processes that previously only humans could perform, such as answering customer service calls, bookkeeping, and reviewing resumes.

Machine learning can also scale to handle larger technical problems and questions, such as image detection for autonomous vehicles, predicting the locations and timelines of natural disasters, and understanding the potential interaction of drugs with medical conditions before clinical trials. That is why machine learning is important.

Why is data important for machine learning?

We've covered the question "why is machine learning important?"; now we need to understand the role data plays. Machine learning uses algorithms that continuously improve over time, but good data is essential for these models to work effectively.

To really understand how machine learning works, you must also understand the data it operates on. Here, we discuss what machine learning datasets are, the types of data needed for effective machine learning, and where engineers can find datasets to use in their own machine learning models.

What is a dataset in machine learning?

To understand what a dataset is, we must first talk about its components. A single row of data is called an instance. A dataset is a collection of instances that share a common attribute. Machine learning models will typically use several datasets, each fulfilling a different role in the system.

For machine learning models to understand how to perform various actions, training datasets must first be fed into the machine learning algorithm, followed by validation datasets (or test datasets) to ensure that the model interprets this data accurately.

Once you feed these training and validation sets into the system, subsequent datasets can be used to shape your machine learning model going forward. The more data you provide to the machine learning system, the faster the model can learn and improve.


What type of data does machine learning need?

Data can come in many forms, but machine learning models rely on four primary types of data: numerical data, categorical data, time series data, and text data.

Machine Learning

If you ask any data scientist how much data is needed for machine learning, you'll most likely get either "It depends" or "The more, the better." And the thing is, both answers are correct.

It really depends on the type of project you're working on, and it's always a good idea to have as many relevant and reliable examples in the datasets as you can get in order to obtain accurate results. But the question remains: how much is enough? And if there isn't enough data, how can you deal with its lack?

Experience with various projects involving artificial intelligence (AI) and machine learning (ML) allowed us at Postindustria to come up with the most effective ways to approach the data quantity problem. That is what we'll discuss below.

Factors that influence the size of the datasets you need

Every ML project has a set of specific factors that affect the size of the AI training datasets required for successful modeling. Here are the most important of them.

The complexity of a model

Simply put, this is the number of parameters that the algorithm has to learn. The more features, size, and variability of the expected output it has to take into account, the more data you need to input.

For example, say you want to train a model to predict housing prices. You are given a table in which each row is a house, and the columns are the location, the neighborhood, the number of bedrooms, floors, bathrooms, and so on, plus the price. In this case, you train the model to predict prices based on how the variables in the columns change. And to learn how each additional input feature influences the output, you'll need more data examples.

The complexity of the learning algorithm

More complex algorithms always require a larger amount of data. If your project needs standard ML algorithms that use structured learning, a smaller amount of data may be enough. Even if you feed the algorithm more data than is sufficient, the results won't improve drastically.

The situation is different when it comes to deep learning algorithms. Unlike traditional machine learning, deep learning doesn't require feature engineering (i.e., constructing input values for the model to fit into) and is still able to learn the representation from raw data. These algorithms work without a predefined structure and figure out all the parameters themselves. In this case, you'll need more data that is relevant for the algorithm-generated categories.

Labeling needs

Depending on how many labels the algorithm has to predict, you may need varying amounts of input data. For example, if you want to tell pictures of cats from pictures of dogs, the algorithm needs to learn several representations internally, and to do so, it converts input data into these representations. But if it's merely finding images of squares and triangles, the representations that the algorithm has to learn are simpler, so the amount of data it requires is much smaller.

The type of project you're working on is another factor that influences the amount of data you need, since different projects have different levels of tolerance for errors. For example, if your task is to predict the weather, the algorithm's prediction can be off by some 10 or 20%. But when the algorithm has to tell whether a patient has cancer or not, an error could cost the patient's life. So you need more data to get more accurate results.

Input diversity

In some cases, algorithms must learn to function in unpredictable situations. For example, when you develop an online virtual assistant, you naturally want it to understand what a visitor to a company's website asks. But people don't usually write perfectly correct sentences with standard requests. They may ask thousands of different questions, use different styles, make grammar mistakes, and so on. The more uncontrolled the environment is, the more data you need for your ML project.

Based on the factors above, you can define the size of the datasets you need to achieve good algorithm performance and reliable results. Now let's dive deeper and find an answer to our main question: how much data is required for machine learning?

What is the optimal size of AI training datasets?

When planning an ML project, many worry that they don't have a lot of data and that the results won't be as reliable as they could be. But only a few actually know how much data is "too little," "too much," or "enough."


The most common way to decide whether a dataset is sufficient is to apply the 10 times rule. This rule means that the amount of input data (i.e., the number of examples) should be ten times the number of degrees of freedom the model has. Usually, degrees of freedom mean parameters in your dataset.

So, for example, if your algorithm distinguishes images of cats from images of dogs based on 1,000 parameters, you need 10,000 pictures to train the model.
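The arithmetic behind the rule can be sketched in a few lines of Python. The function name and the `factor` argument are illustrative assumptions, not part of any library.

```python
def min_examples_ten_times_rule(num_parameters: int, factor: int = 10) -> int:
    """Rough lower bound on training examples under the 10 times rule."""
    if num_parameters < 0:
        raise ValueError("num_parameters must be non-negative")
    # The rule is a simple multiplication: examples = factor * degrees of freedom.
    return factor * num_parameters


# The cats-vs-dogs example from the text: 1,000 parameters.
print(min_examples_ten_times_rule(1_000))  # 10000
```

This is only a rough starting estimate, not a guarantee of model quality.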

Although the 10 times rule is quite popular in machine learning, it only works for small models. Larger models do not follow this rule, as the number of collected examples doesn't necessarily reflect the actual amount of training data. In our case, we'd need to count not only the number of rows but the number of columns, too. The right approach would be to multiply the number of images by the size of each image and by the number of color channels.
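As a rough sketch of that multiplication, the snippet below counts the raw input values in an image dataset. The 64x64 resolution and three color channels are assumptions chosen purely for illustration.

```python
def raw_input_values(num_images: int, height: int, width: int, channels: int = 3) -> int:
    """Count individual pixel values: images x (height x width) x color channels."""
    return num_images * height * width * channels


# 10,000 images, assumed to be 64x64 pixels with 3 color channels (RGB).
total = raw_input_values(10_000, 64, 64, 3)
print(total)  # 122880000
```

The example count (10,000) stays the same, but the amount of data the model actually sees is several orders of magnitude larger.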

You can use it for a rough estimate to get the project off the ground. But to figure out how much data is required to train a particular model within your specific project, you need to find a technical partner with relevant expertise and consult with them.

On top of that, you should always remember that AI models don't study the data itself but rather the relationships and patterns behind the data. So it's not only quantity that will influence the results, but also quality.

But what can you do if the datasets are scarce?

There are a few strategies to deal with this issue.

How to deal with the lack of data

Lack of data makes it impossible to establish the relationships between the input and output data, causing what's known as "underfitting". If you lack input data, you can either create synthetic datasets, augment the existing ones, or apply the knowledge and data generated earlier for a similar problem. Let's review each case in more detail below.

Data augmentation

Data augmentation is a process of expanding an input dataset by slightly changing the existing (original) examples. It's widely used for image segmentation and classification. Common image alteration techniques include cropping, rotation, zooming, flipping, and color changes.
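To make the idea concrete, here is a minimal sketch of three of these alterations (flipping, rotation, cropping) on a tiny grayscale "image" stored as a nested list. Real projects would normally use an image library. The helper names are hypothetical, and the 3x3 image is made up for illustration.

```python
from typing import List

Image = List[List[int]]  # rows of pixel intensities


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def rotate_90_clockwise(img: Image) -> Image:
    """Rotate the image a quarter turn clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def crop(img: Image, top: int, left: int, height: int, width: int) -> Image:
    """Cut out a height x width window starting at (top, left)."""
    return [row[left:left + width] for row in img[top:top + height]]


original = [[1, 2, 3],
            [4, 5, 6],
            [7, 8, 9]]

# One original example becomes four training examples.
augmented = [
    original,
    flip_horizontal(original),
    rotate_90_clockwise(original),
    crop(original, 0, 0, 2, 2),
]
print(len(augmented))  # 4
```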


In general, data augmentation helps solve the problem of limited data by scaling the available datasets. Besides image classification, it can be used in a number of other cases. For example, here's how data augmentation works in natural language processing (NLP):

- Back translation: translating the text from the original language into a target one and then from the target one back to the original

- Easy data augmentation (EDA): replacing synonyms, random insertion, random swap, random deletion, and shuffling sentence order to obtain new samples and exclude duplicates

- Contextualized word embeddings: training the algorithm to use a word in different contexts (e.g., when you need to understand whether "mouse" means an animal or a device)

Data augmentation adds more varied data to the models, helps resolve class imbalance problems, and increases generalization ability. However, if the original dataset is biased, the augmented data will be too.
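Two of the easy data augmentation operations for text, random swap and random deletion, can be sketched as follows. This is a simplified illustration under stated assumptions, not a reference EDA implementation: the function names, the deletion probability `p`, and the sample sentence are all invented for this example.

```python
import random
from typing import List


def random_swap(words: List[str], rng: random.Random, n_swaps: int = 1) -> List[str]:
    """Swap the positions of two randomly chosen words, n_swaps times."""
    words = list(words)
    if len(words) < 2:
        return words
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(words)), 2)
        words[i], words[j] = words[j], words[i]
    return words


def random_deletion(words: List[str], rng: random.Random, p: float = 0.2) -> List[str]:
    """Drop each word with probability p, always keeping at least one word."""
    if not words:
        return []
    kept = [w for w in words if rng.random() >= p]
    return kept if kept else [rng.choice(words)]


rng = random.Random(0)  # fixed seed so the run is repeatable
sentence = "the quick brown fox jumps over the lazy dog".split()

samples = [random_swap(sentence, rng) for _ in range(3)]
samples += [random_deletion(sentence, rng) for _ in range(3)]

# Exclude duplicates while preserving order, as the EDA description suggests.
unique_samples = list(dict.fromkeys(" ".join(s) for s in samples))
print(len(unique_samples))
```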

Synthetic data generation

Synthetic data generation in machine learning is sometimes considered a type of data augmentation, but these concepts are different. During augmentation, we change the qualities of existing data (e.g., blur or crop an image so we have three images instead of one), while synthetic generation means creating new data with similar but not identical properties (e.g., creating new images of cats based on previous images of cats).

During synthetic data generation, you can label the data right away and then generate it from the source, knowing exactly what data you'll receive, which is useful when not much data is available. When working with real datasets, however, you need to first collect the data and then label each example. The synthetic data generation approach is widely applied when developing AI-based healthcare and fintech solutions, since real-life data in these industries is subject to strict privacy laws.
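The "label first, then generate" idea can be illustrated with a toy generator that samples 2D points around a chosen center for each label. Everything here (the labels, centers, spread, and sample counts) is an assumption made up for the sketch. Real synthetic data pipelines are far more elaborate.

```python
import random
from typing import List, Tuple

Point = Tuple[float, float]


def generate_labeled_points(label: str, center: Point, n: int,
                            rng: random.Random, spread: float = 0.5) -> List[Tuple[Point, str]]:
    """Create n synthetic points near `center`; the label is known before generation."""
    cx, cy = center
    return [((rng.gauss(cx, spread), rng.gauss(cy, spread)), label) for _ in range(n)]


rng = random.Random(42)  # fixed seed for repeatability
synthetic = (
    generate_labeled_points("cat", (0.0, 0.0), 100, rng)
    + generate_labeled_points("dog", (3.0, 3.0), 100, rng)
)

# No separate labeling pass is needed: every example already carries its label.
print(len(synthetic), synthetic[0][1], synthetic[-1][1])
```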

At Postindustria, we also apply a synthetic data approach in ML. Our recent virtual ring try-on is a prime example. To develop a hand-tracking model that would work for various hand sizes, we'd need a sample of 50,000-100,000 hands. Since it would be unrealistic to collect and label that many real images, we created them synthetically by drawing images of different hands in various positions in a special visualization program. This gave us the datasets needed to train the algorithm to track the hand and make the ring fit the width of the finger.

While synthetic data may be a great solution for many projects, it has its flaws.

Synthetic data vs. real data problem

One of the problems with synthetic data is that it can lead to results that have little application in solving real-life problems once real-life variables step in.

For example, if you develop a virtual makeup try-on using photos of people with one skin color and then generate more synthetic data based on the existing samples, the app won't work well on other skin colors. The result? Customers won't be satisfied with the feature, so the app will reduce the number of potential users instead of growing it.

Another issue with predominantly synthetic data is that it can produce biased outcomes. The bias can be inherited from the original sample or arise when other factors are overlooked. For example, if we take ten people with a certain health condition and create more data based on those cases to predict how many people out of 1,000 may develop the same condition, the generated data will be biased because the original sample is biased by the choice of sample size (ten).

Transfer learning

Transfer learning is another technique for solving the problem of limited data. This method is based on applying the knowledge gained while working on one task to a new, similar task. The idea of transfer learning is that you train a neural network on a particular dataset and then use the lower "frozen" layers as feature extractors.

Then, the top layers are trained on other, more specific datasets. For example, say the model was trained to recognize photos of wild animals (e.g., lions, giraffes, bears, elephants, tigers). Next, it can extract features from further images to do more specific analysis and recognize animal species (i.e., it can be used to distinguish photos of lions from photos of tigers).

The transfer learning technique speeds up the training stage, since it allows you to use the backbone network's output as features in later stages. But it can be used only when the tasks are similar; otherwise, this method can hurt the effectiveness of the model.
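The frozen-backbone idea can be shown with a deliberately tiny, framework-free sketch. The backbone weights below are hypothetical stand-ins for a pretrained network and are never updated. Only a small perceptron "head" is trained on the new task. The data points and labels are invented for illustration.

```python
from typing import List, Sequence, Tuple

# Hypothetical "pretrained" backbone weights (assumed, not actually trained here).
# They are frozen: nothing below modifies them.
BACKBONE_WEIGHTS: Tuple[Tuple[float, float], ...] = ((1.0, 1.0), (1.0, -1.0))


def backbone_features(x: Sequence[float]) -> List[float]:
    """Frozen feature extractor: one linear layer followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in BACKBONE_WEIGHTS]


def predict(x: Sequence[float], weights: List[float], bias: float) -> int:
    feats = backbone_features(x)
    score = sum(w * f for w, f in zip(weights, feats)) + bias
    return 1 if score > 0 else 0


def train_head(samples, max_epochs: int = 1000) -> Tuple[List[float], float]:
    """Train only the top layer (a perceptron) on features from the frozen backbone."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, label in samples:
            error = label - predict(x, weights, bias)
            if error != 0:
                mistakes += 1
                feats = backbone_features(x)
                weights = [w + error * f for w, f in zip(weights, feats)]
                bias += error
        if mistakes == 0:  # every training example is classified correctly
            break
    return weights, bias


# Small target-task dataset: label 1 when the first input exceeds the second.
target_data = [((2.0, 1.0), 1), ((3.0, 0.0), 1), ((4.0, 2.0), 1),
               ((1.0, 2.0), 0), ((0.0, 3.0), 0), ((2.0, 4.0), 0)]

head_weights, head_bias = train_head(target_data)
accuracy = sum(predict(x, head_weights, head_bias) == y for x, y in target_data) / len(target_data)
print(accuracy)
```

Because the backbone is fixed, only three numbers (two head weights and a bias) are learned, which is why a handful of examples is enough in this toy setting.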

Importance of quality data in healthcare projects

The availability of big data is one of the biggest drivers of ML advances, including in healthcare. The potential it brings to the field is evidenced by some high-profile deals that closed over the past decade. In 2015, IBM purchased a company called Merge, which specialized in medical imaging software, for $1bn, acquiring huge amounts of medical imaging data for IBM.

In 2018, the pharmaceutical giant Roche acquired a New York-based company focused on oncology, called Flatiron Health, for $2bn, to fuel data-driven personalized cancer care.

However, the availability of data by itself is often not enough to successfully train an ML model for a medtech solution. The quality of data is of utmost importance in healthcare projects. Heterogeneous data types are a challenge to analyze in this field. Data from laboratory tests, medical images, vital signs, and genomics all come in different formats, making it hard to apply ML algorithms to all the data at once.

Another issue is the limited accessibility of medical datasets. MIT, for instance, which is considered to be one of the pioneers in the field, claims to have the only substantially sized database of critical care health records that is publicly accessible.

Its MIMIC database stores and analyzes health data from over 40,000 critical care patients. The data include demographics, laboratory tests, vital signs collected by patient-worn monitors (blood pressure, oxygen saturation, heart rate), medications, imaging data, and notes written by clinicians. Another solid dataset is the Truven Health Analytics database, which holds data from 230 million patients collected over 40 years based on insurance claims. However, it's not publicly available.

Another problem is the small amount of data available for some diseases. Identifying disease subtypes with AI requires a sufficient amount of data for each subtype to train ML models. In some cases the data are too scarce to train an algorithm. In these cases, scientists try to develop ML models that learn as much as possible from healthy patient data. We must take care, however, to make sure we don't bias algorithms toward healthy patients.

Need data for an ML project? We've got you covered!

The size of AI training datasets is critical for machine learning projects. To define the optimal amount of data you need, you have to consider a number of factors, including project type, algorithm and model complexity, error margin, and input diversity. You can also apply the 10 times rule, but it's not always reliable when it comes to complex tasks.

If you conclude that the available data isn't enough and it's impossible or too costly to gather the required real-world data, try applying one of the scaling techniques. It can be data augmentation, synthetic data generation, or transfer learning, depending on your project needs and budget.

Dataset in Machine Learning

24x7offshoring offers AI capabilities built into our applications, empowering your business processes with AI. It is as intuitive as it is flexible and powerful. And 24x7offshoring's unwavering commitment to responsibility ensures thoughtfulness and compliance in every interaction.

24x7offshoring's Business AI, tailored to your specific data landscape and the nuances of your industry, enables smarter decisions and efficiencies at scale:

- AI embedded in the context of your business processes.

- AI trained on the industry's broadest business datasets.

- AI based on leading ethics and data privacy standards.

The traditional definition of artificial intelligence is the science and engineering required to make intelligent machines. Machine learning is a subfield or branch of AI that applies complex algorithms such as neural networks, decision trees, and large language models (LLMs) to structured and unstructured data to determine outcomes.

From these algorithms, classifications or predictions are made based on certain input criteria. Examples of machine learning applications are recommendation engines, facial recognition systems, and autonomous vehicles.

Product benefits

Whether you're looking to improve your customer experience, boost productivity, optimize business processes, or accelerate innovation, Amazon Web Services (AWS) offers the most comprehensive set of artificial intelligence (AI) services to meet your business needs.

Pretrained, ready-to-use AI services

AI services deliver pretrained models that are ready to use in your applications and workflows.

Continuously learning APIs

Because we use the same deep learning technology that powers Amazon.com and our machine learning services, you get the best accuracy from continuously learning APIs.

No ML experience required

With AI services readily available, you can add AI capabilities to your business applications (no ML experience required) to address common business challenges.

An introduction to machine learning: datasets and resources

Machine learning is one of the hottest topics in technology. The concept has been around for decades, but the conversation is now heating up around its use in everything from Internet search engines and spam filters to autonomous vehicles. Machine learning training is the process by which machine intelligence is trained on datasets.

To do this correctly, it's important to have a wide variety of datasets at your disposal. Fortunately, there are many sources of datasets for machine learning, including public databases and proprietary datasets.

What are machine learning datasets?

Machine learning datasets are what machine learning algorithms learn from. A dataset is a collection of examples from which a model learns to make predictions, with labels that represent the outcome of a given prediction (success or failure). One of the easiest ways to get started with machine learning is to use libraries like scikit-learn or TensorFlow, which help you accomplish most tasks without writing much code.

There are three main types of machine learning methods: supervised (learning from examples), unsupervised (learning through clustering), and reinforcement learning (rewards). Supervised learning is the practice of teaching a computer how to recognize patterns in data. Supervised learning algorithms include random forests, nearest neighbors, and support vector machines (SVM).

Machine learning datasets come in many different forms and can be obtained from a variety of places. Text data, image data, and sensor data are the three most common types of machine learning datasets. A dataset is essentially a collection of data that can be used to make predictions about future events or outcomes based on historical data. Datasets are usually labeled before they can be used by machine learning algorithms, so that the algorithm knows what outcome to predict or what to classify as an anomaly.

For example, if you want to predict whether a customer will churn or not, you can label the records in your dataset as "churned" and "not churned" so that the machine learning algorithm can learn from past data. Machine learning datasets can be created from any data source, even if that data is unstructured. For example, you can take all the tweets that mention your company and use them as a machine learning dataset.
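As a small illustration of a labeled dataset in use, the sketch below pairs hypothetical customer features with churn labels and classifies a new customer with a one-nearest-neighbor lookup. The two features (months since last purchase, number of support tickets) and all values are invented for the example.

```python
import math
from typing import List, Sequence, Tuple

# Each entry: (features, label). Features are hypothetical:
# (months since last purchase, support tickets opened).
labeled_customers: List[Tuple[Tuple[float, float], str]] = [
    ((1.0, 0.0), "not churned"),
    ((2.0, 1.0), "not churned"),
    ((9.0, 4.0), "churned"),
    ((11.0, 3.0), "churned"),
]


def predict_nearest(features: Sequence[float],
                    labeled: List[Tuple[Tuple[float, float], str]]) -> str:
    """Return the label of the closest labeled example (1-nearest neighbor)."""
    _, label = min(labeled, key=lambda item: math.dist(features, item[0]))
    return label


print(predict_nearest((10.0, 5.0), labeled_customers))  # churned
print(predict_nearest((1.5, 0.0), labeled_customers))   # not churned
```

Without the labels, the algorithm would have nothing to compare a new customer against. This is what labeling provides in supervised learning.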

To learn more about machine learning and its origins, read our blog post on the history of machine learning.

What are the dataset types?

A machine learning dataset is a collection of data that has been organized into training, validation, and test sets. Machine learning commonly uses these sets to teach algorithms how to recognize patterns in data.

- The training set is the data used to teach the algorithm what to look for and how to recognize it when it appears in other sets of data.

- The validation set is a collection of known, correct data against which the algorithm can be tested while it is being tuned.

- The test set is the final collection of unseen data on which performance is measured.
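A three-way split can be sketched with the standard library alone. The 70/15/15 proportions, the fixed seed, and the function name are assumptions for illustration. Suitable ratios depend on the project.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(rows: Sequence[T], train_frac: float = 0.7, val_frac: float = 0.15,
                  seed: int = 0) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle rows and split them into training, validation, and test sets."""
    if train_frac <= 0 or val_frac < 0 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave room for a non-empty test share")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains is held out for testing
    return train, val, test


train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))  # 70 15 15
```

Shuffling before splitting helps each subset resemble the whole dataset. For time series data, a chronological split is usually more appropriate.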

Why do you need datasets for your AI model?

Machine learning datasets are important for two reasons: they let you train your machine learning models, and they provide a benchmark for measuring the accuracy of your models. Datasets come in a variety of sizes and types, so it is important to select one that is appropriate for the task at hand.

Machine learning models are only as good as the data they're trained on. The more data you have, the better your model will be. That's why it's important to have a large number of processed datasets when working on AI projects, so you can train your model correctly and get top-notch results.

There are many different types of machine learning datasets. Some of the most common include text data, audio data, video data, and image data. Each type of data has its own specific set of use cases.

Text data is a great choice for applications that need to understand natural language. Examples include chatbots and sentiment analysis.

Audio datasets are used for a wide range of purposes, including bioacoustics and sound modeling. They may also be useful in computer vision, speech recognition, or music information retrieval.

Video datasets are used to create advanced digital video production software, such as motion tracking, facial recognition, and 3D rendering. They can also be created for the purpose of collecting data in real time.

Image datasets are used for a variety of different purposes, including image compression and recognition, speech synthesis, natural language processing, and more.

What makes a great dataset?

A good machine learning dataset has a few key characteristics: it's large enough to be representative, high in quality, and relevant to the task at hand.

Features of a good machine learning dataset

Quantity is important because you need enough data to train your algorithm properly. Quality is essential to avoid problems of bias and blind spots in the data.

If you don't have enough data, you risk overfitting your model; that is, training it so closely on the available data that it performs poorly when applied to new examples. In such cases, it's always a good idea to consult a data scientist. Relevance and coverage are key factors that shouldn't be forgotten when collecting data. Use real data if possible to avoid problems with bias and blind spots in the data.

In summary: a great machine learning dataset contains well-defined variables and features, has minimal noise (no irrelevant information), scales to large numbers of data points, and is easy to work with.

Relating to statistics, there are numerous totally different property that you should utilize in your gadget to realize insights into the info set. The commonest sources of statistics are internet and AI-generated knowledge. Nevertheless, different sources embody knowledge units from private and non-private teams or particular person teams that gather and share info on-line.

An necessary issue to remember is that the format of the info will have an effect on the readability or problem of making use of the said knowledge. Distinctive file codecs can be utilized to gather statistics, however not all codecs are appropriate for the machine to acquire knowledge in regards to the fashions. For instance, textual content paperwork are simple to learn however don’t comprise details about the variables being collected.

On the other hand, CSV (comma-separated values) files hold both text and numeric data in one place, making them convenient for machine learning models.
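As a small illustration of why CSV is convenient, the sketch below reads a CSV with Python's standard `csv` module and converts the numeric columns. The column names (`product`, `units_sold`, `price`) and the sample rows are invented for this example.

```python
import csv
import io

# A tiny in-memory CSV; in practice this would be an open file.
raw = io.StringIO(
    "product,units_sold,price\n"
    "notebook,12,3.50\n"
    "pencil,40,0.25\n"
)

rows = []
for record in csv.DictReader(raw):
    # DictReader yields every value as a string, so numeric columns
    # must be converted explicitly before a model can use them.
    rows.append({
        "product": record["product"],
        "units_sold": int(record["units_sold"]),
        "price": float(record["price"]),
    })

print(rows)
```

The header row names each variable, which is exactly the information a plain text document lacks.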

It is also important to ensure that your data set's formatting stays consistent as different people update it manually. This prevents discrepancies when using a data set that has been updated over the years. For your machine learning model to be accurate, you need consistent input data.
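One way to catch formatting drift early is to check every row against an expected layout before training. This is a minimal sketch, assuming rows arrive as dictionaries of strings (for example from `csv.DictReader`); `EXPECTED_COLUMNS` and `find_format_problems` are hypothetical names chosen for illustration.

```python
# Expected column names and the type each value must be convertible to.
EXPECTED_COLUMNS = {"date": str, "units_sold": int, "price": float}

def find_format_problems(rows):
    """Return (row_index, message) pairs for rows that break the expected layout."""
    problems = []
    for i, row in enumerate(rows):
        if set(row) != set(EXPECTED_COLUMNS):
            problems.append((i, "unexpected columns"))
            continue
        for name, caster in EXPECTED_COLUMNS.items():
            try:
                caster(row[name])
            except (ValueError, TypeError):
                problems.append(
                    (i, f"{name}={row[name]!r} is not a valid {caster.__name__}")
                )
    return problems

rows = [
    {"date": "2024-01-01", "units_sold": "12", "price": "3.50"},
    {"date": "2024-01-02", "units_sold": "twelve", "price": "3.50"},  # text in a numeric column
    {"date": "2024-01-03", "units_sold": "7", "cost": "3.50"},        # renamed column
]

problems = find_format_problems(rows)
for index, message in problems:
    print(f"row {index}: {message}")
```

Running a check like this whenever the data set is updated keeps manual edits from silently changing the input your model sees.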

Top 20 free machine learning dataset sources

Data is key. Without data, there can be no models and nothing for them to learn. Fortunately, there are many sources from which you can obtain free data sets for machine learning.

The more data you have for training, the better, although volume alone is not enough. It is equally important to ensure that data sets are relevant to the task, accessible, and high in quality. To start, make sure that your data sets are not bloated. You will probably need to spend some time cleaning up the data if it has more rows or columns than the task requires.
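Trimming a bloated data set can be as simple as keeping only the columns the task needs and dropping repeated rows. The sketch below assumes rows are dictionaries; `trim_dataset` and the sample columns are hypothetical.

```python
def trim_dataset(rows, keep_columns):
    """Keep only `keep_columns` and drop rows that repeat after trimming."""
    seen = set()
    trimmed = []
    for row in rows:
        slim = tuple(row[col] for col in keep_columns)
        if slim in seen:
            continue  # identical on every kept column: treat as a duplicate
        seen.add(slim)
        trimmed.append(dict(zip(keep_columns, slim)))
    return trimmed

rows = [
    {"id": 1, "age": 34, "income": 52000, "notes": "n/a"},
    {"id": 2, "age": 34, "income": 52000, "notes": "call back"},
    {"id": 3, "age": 51, "income": 61000, "notes": ""},
]

slim_rows = trim_dataset(rows, ["age", "income"])
print(slim_rows)
```

Note the design choice: duplicates are judged after the extra columns are removed. Whether two rows that agree on the kept columns really are redundant depends on the task, so treat this as a starting point rather than a rule.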

To save you the hassle of sifting through all the options, we have compiled a list of the top 20 free data sets for machine learning.

The 24x7offshoring platform's datasets are ready for use with many popular machine learning frameworks. The data sets are well organized and updated regularly, making them a valuable resource for anyone looking for interesting data.

If you are looking for data sets to train your models, there is no better place than 24x7offshoring. With more than 1 TB of data available, continually updated by a committed community that contributes new code and input files and helps shape the platform, it will be hard not to find what you need right here!

UCI Machine Learning Repository

The UCI Machine Learning Repository is a dataset source that hosts a range of data sets popular in the machine learning community. The data sets produced by this project are of excellent quality and can be used for numerous tasks. Because they are user-contributed, not every data set is 100% clean, but most have been carefully selected to meet specific needs without any major issues.

If you are looking for large data sets that are ready for use with 24x7offshoring services, look no further than the 24x7offshoring public data set repository. The data sets here are organized around specific use cases and come preloaded with tools that pair with the 24x7offshoring platform.

Google Dataset Search

Google Dataset Search is a fairly new tool that makes it easy to find datasets regardless of their source. Data sets are indexed according to their published metadata, making it easy to find what you are looking for. While the selection isn't as robust as some of the other options on this list, it is growing every day.

EU Open Data Portal

The European Union Open Data Portal is a one-stop shop for all your data needs. It provides datasets published by many different institutions within Europe and in 36 different countries. With an easy-to-use interface that lets you search by specific categories, this site has everything a researcher could hope to discover while looking for public domain data.

Finance and economics data sets

The finance sector has embraced machine learning with open arms, and it is no wonder why. Compared to other industries where data can be harder to find, finance and economics offer a trove of data that is ideal for AI models that aim to predict future outcomes based on past performance.

Data sets of this kind allow you to predict things like stock prices, economic indicators, and exchange rates.

24x7offshoring provides access to financial, economic and alternative data sets. Data is available in different formats:

● time series (date/time stamped) and

● tables: numeric/categorical types, including strings for those who need them

The World Bank, the global financial institution, is a useful resource for anyone who wants to get a picture of world events. Its data bank has everything from population demographics to key indicators that may be relevant in development charts. It is open without registration, so you can access it easily.

Open data from international financial institutions is the right source for large-scale analyses. The information it contains includes population demographics, macroeconomic data, and key development indicators that can help you understand how the world's countries are faring on various fronts.

Image Datasets/Computer Vision Datasets

A picture is worth a thousand words, and this is especially relevant in the field of computer vision. With the rise in popularity of self-driving cars, and with facial recognition software increasingly used for security purposes, demand for image data keeps growing. The medical imaging industry also relies on databases containing images and videos to diagnose patient conditions effectively.

Free image datasets can be used for facial recognition

The 24x7offshoring dataset contains hundreds of thousands of color images that are ideal for training image classification models. While this dataset is most often used for academic research, it can also be used to train machine learning models for commercial purposes.

Pure Language Processing Datasets

The current state of the art in machine learning has been applied to a wide variety of fields, including voice and speech recognition, language translation, and text analysis. Data sets for natural language processing are often large and require a lot of computing power to train machine learning models.

What to remember before purchasing a data set

When it comes to machine learning, data is key. The more data you have, the better your models will perform, but not all data is equal. Before purchasing a data set for your machine learning project, there are several things to keep in mind:

Tips before purchasing a data set

Plan your project carefully before purchasing a data set: not all data sets are created equal. Some data sets are designed for research purposes, while others are meant for production use. Make sure that the data set you purchase matches your needs.

Type and quality of data: not all data is of the same type either. Make sure that the data set contains information that is applicable to your company.

Relevance to your project: data sets can be extremely large and complicated, so make sure the data is relevant to your specific project. If you are working on a facial recognition system, for example, don't buy an image dataset that is mostly made up of cars and animals.

When it comes to machine learning, the phrase “one size doesn't fit all” is especially relevant. That is why we offer custom-designed data sets that can fit the unique needs of your business.

High-quality data sets for machine learning

Data sets for machine learning and artificial intelligence are essential to producing results. To achieve this, you need access to large quantities of data that meet all the requirements of your particular learning objective. This is often one of the most difficult tasks when running a machine learning project.

At 24x7offshoring, we understand the importance of data and have gathered a large international crowd of 4.5 million 24x7offshoring contributors to help you prepare your data sets. We offer a wide variety of data sets in specific formats, including text, images, and videos. Best of all, you can get a quote for your custom machine learning data set by clicking the link below.

There are links to find out more about machine learning datasets, plus information about our team of experts who can help you get started quickly and easily.

Quick tips for your machine learning project

1. Make sure all data is labeled correctly. This includes the input and output variables of your model.

2. Avoid using non-representative samples when training your models.

3. Use a variety of data sets if you want to train your models effectively.

4. Select data sets that are relevant to your problem domain.

5. Preprocess the data so that it is ready for modeling.

6. Be careful when selecting machine learning algorithms; not all algorithms are suitable for all types of data sets.

Machine learning is becoming increasingly important in our society. It is not just for the big players, though: all businesses can benefit from machine learning. To get started, you need to find a good data set and database. Once you have them, your data scientists and data engineers can take their work to the next level. If you are stuck at the data collection stage, it may be worth reconsidering how you collect your data.

What is a machine learning dataset and why is it essential to your AI model?

According to the Oxford Dictionary, a data set in the computing field is “a collection of data that is treated as a single unit by a computer.” This means that a data set consists of many separate pieces of data, but can be used to train an algorithm to find predictable patterns across the whole data set.

Data is an essential component of any AI model and basically the sole reason for the rise in popularity of machine learning that we are witnessing today. Thanks to the availability of data, scalable ML algorithms became viable as actual products that can generate revenue for a business, rather than as a component of its main processes.

Your business has always been data-driven. Factors such as what the customer bought, product popularity, and the seasonality of customer flow have always been important in building a business. With the advent of machine learning, however, it is now essential to organize this data into data sets.

Sufficient volumes of data allow you to analyze hidden trends and patterns and make decisions based on the data set you have built. Although it may seem simple enough, working with data is more complex than that. It requires proper treatment of the available data, from defining the purpose of a data set to preparing the raw data so that it is actually usable.

Splitting Your Data: Training, Testing, and Validation Data Sets

In machine learning, a data set is usually not used for training alone. A single data set that has already been processed is usually divided into several subsets, which is necessary to verify how well the model training went.

For this purpose, a test data set is usually separated from the rest of the data. In addition, a validation data set, while not strictly necessary, is very useful to avoid training your algorithm on the same kind of data and making biased predictions.
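The split described above can be sketched in a few lines of standard-library Python. The function name `split_dataset` and the 70/15/15 proportions are assumptions made for illustration; the article does not prescribe specific ratios.

```python
import random

def split_dataset(rows, val_fraction=0.15, test_fraction=0.15, seed=42):
    """Shuffle rows once, then cut them into train, validation, and test subsets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible

    n_test = round(len(shuffled) * test_fraction)
    n_val = round(len(shuffled) * val_fraction)

    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]      # everything left over is for training
    return train, val, test

train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

Shuffling before cutting matters: if the rows are sorted (by date or by class, for example), slicing without shuffling would give the three subsets different kinds of data. For time series, where order carries meaning, a chronological split is usually the better choice.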

Gather. The first thing you need to do when looking for a data set is to select the sources you will use for ML data collection. There are usually three types of sources you can choose from: freely available open-source data sets, the Internet, and synthetic data generators. Each of these sources has its pros and cons and should be used in specific cases. We will talk about this step in more detail in the next section of this article.

Preprocess. There is a principle in data science that every experienced professional adheres to. Start by answering this question: has the data set you are using been used before? If not, assume that it is flawed. If so, there is still a high chance that you will need to adapt the set to your specific needs. After we cover the sources, we will talk more about the features that characterize a suitable data set.

Annotate. Once you have made sure your data is clean and relevant, you also need to make sure it is understandable to a computer. Machines don't understand data in the same way as humans (they are not able to assign the same meaning to images or words as we do).

This step is where a lot of companies decide to outsource the task to professional data labeling services, especially when hiring trained annotation professionals is not feasible. We have a great article on building an in-house labeling team versus outsourcing this task to help you understand which way is best for you.
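To show what "understandable to a computer" can mean in the simplest case, here is a minimal sketch that turns human-written labels into integer class ids. The labels and the helper `build_label_index` are invented for illustration; real annotation projects involve far more than this mapping.

```python
def build_label_index(labels):
    """Assign each distinct label a stable integer id (alphabetical order)."""
    return {label: idx for idx, label in enumerate(sorted(set(labels)))}

# Labels as a human annotator might write them.
annotations = ["cat", "dog", "cat", "bird"]

label_index = build_label_index(annotations)
encoded = [label_index[label] for label in annotations]

print(label_index)  # {'bird': 0, 'cat': 1, 'dog': 2}
print(encoded)      # [1, 2, 1, 0]
```

Sorting the labels before numbering them keeps the ids stable across runs, so a model trained today and a data set encoded next month agree on what each number means.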
