Suppose we wish to detect the presence or absence of something, such as a cat or a spam message. Then we need a binary classifier that can distinguish between two classes. For example, a cat classifier distinguishes between “CAT” and “NOT CAT”, and a spam classifier distinguishes between the “SPAM” and “NOT SPAM” classes.

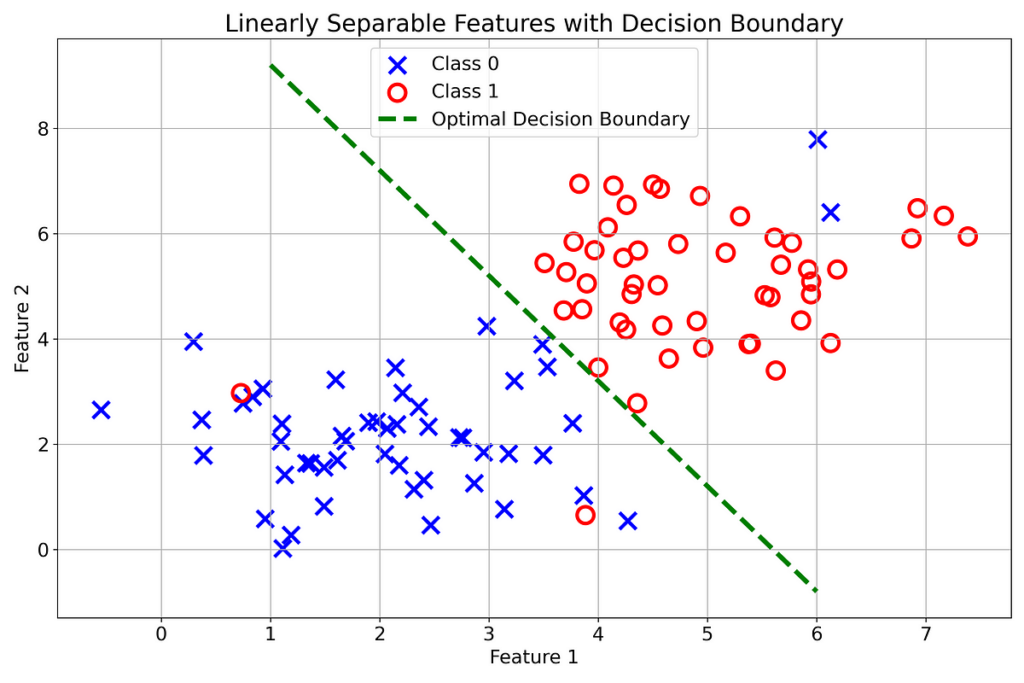

If you are lucky enough that the features of your classes are well separated, as in Fig. 1, then you could use the Logistic Regression model. It defines a linear boundary (like the green dotted line in Fig. 1) to distinguish between the two classes.

The example shown in Fig. 1 has a 1-D decision boundary (a line). This is because the classes have only two features, which can be separated by a 1-D boundary, as we’ll see. The equation of this decision boundary is given as:

$$w_1 x_1 + w_2 x_2 + b = 0 \tag{1}$$

Variables Description:

𝑥₁: Feature 1

𝑥₂: Feature 2

w₁: Weight value corresponding to Feature 1

w₂: Weight value corresponding to Feature 2

b: Bias or offset

The ideal decision boundary separates the two classes completely, so that all “CLASS 0” instances lie on one side of the boundary and all “CLASS 1” instances lie on the other. However, this may not be possible in practice. Fig. 1 demonstrates this: a few examples of one class are intermingled with the other and cannot be separated. Hence, an optimal decision boundary is one that separates most of the instances of the two classes.

Every point (𝑥₁, 𝑥₂) that lies on the decision boundary, as shown in Fig. 2(b), satisfies Eqn. 1. This boundary divides the 𝑥₁𝑥₂-plane into two regions: the region below it and the region above it. Intuitively, we can tell whether a point (𝑥₁, 𝑥₂) lies above or below the boundary by substituting the point into the left-hand side of the boundary equation. If it produces a positive value, the point lies in the upper region; if the value is negative, the point lies below the boundary, as shown in Fig. 2 (a) and (c), respectively. (This reading assumes w₂ > 0; if w₂ < 0, the signs swap.)
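To make the above-or-below test concrete, here is a minimal Python sketch. The boundary 𝑥₁ + 𝑥₂ − 1 = 0 (w₁ = 1, w₂ = 1, b = −1) is a made-up example rather than one learned from data. The helper names `boundary_value` and `side_of_boundary` are hypothetical. Because w₂ > 0 here, a positive value really does mean “above”.

```python
def boundary_value(x1: float, x2: float, w1: float, w2: float, b: float) -> float:
    """Evaluate the left-hand side of the boundary equation: w1*x1 + w2*x2 + b."""
    return w1 * x1 + w2 * x2 + b


def side_of_boundary(x1: float, x2: float, w1: float, w2: float, b: float) -> str:
    """Report whether a point lies above, below, or on the line (assumes w2 > 0)."""
    value = boundary_value(x1, x2, w1, w2, b)
    if value > 0:
        return "above"
    if value < 0:
        return "below"
    return "on"


# Illustrative (hypothetical) boundary: x1 + x2 - 1 = 0
w1, w2, b = 1.0, 1.0, -1.0
for point in [(1.0, 1.0), (0.5, 0.5), (0.0, 0.0)]:
    print(point, side_of_boundary(*point, w1, w2, b))
# (1.0, 1.0) above
# (0.5, 0.5) on
# (0.0, 0.0) below
```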

But what is the point (𝑥₁, 𝑥₂)? It is the pair of Feature 1 and Feature 2 values of an example. Our goal is to place all feature pairs (𝑥₁, 𝑥₂) associated with “CLASS 0” below the boundary and those associated with “CLASS 1” above it (or vice versa). The boundary equation helps us navigate by telling whether the features lie on the expected side of the boundary.

Before discussing how to obtain an optimal decision boundary, let’s generalize it to more dimensions.

For easier visualization and understanding, we have considered only two features, 𝑥₁ and 𝑥₂. In practice, however, the number of features may be far greater than two.

What do you think increasing the number of features will do to the decision boundary?

Since our classes had two features, we were able to plot them in a 2-D plane. A 1-D decision boundary (a line) was then able to separate them by defining two regions where the two classes could reside (Fig. 1).

Now suppose our classes have three features, 𝑥₁, 𝑥₂ and 𝑥₃, which must be plotted in 3-D space. They can no longer be separated by a line, but they can be separated by a 2-D plane, as shown in the animated Fig. 3 below:

The equation of a 2-D decision boundary (a plane) is given as:

$$w_1 x_1 + w_2 x_2 + w_3 x_3 + b = 0$$

In general, two classes with an n-dimensional feature space can be distinguished using an (n-1)-dimensional hyperplane as the decision boundary. Beyond a 3-D feature space, an (n-1)-dimensional hyperplane cannot be imagined or visualized like a 1-D line or a 2-D plane. Its equation, however, is easy to write down:

$$w_1 x_1 + w_2 x_2 + \cdots + w_n x_n + b = 0$$

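Using matrix notation, we can write the equation succinctly as:

$$\mathbf{w}^\top \mathbf{x} + b = 0, \qquad \mathbf{w} = \begin{bmatrix} w_1 \\ \vdots \\ w_n \end{bmatrix}, \quad \mathbf{x} = \begin{bmatrix} x_1 \\ \vdots \\ x_n \end{bmatrix} \tag{5}$$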

The same idea carries over seamlessly to n-dimensional space. The (n-1)-dimensional hyperplane’s equation (Eqn. 5) produces a positive output for points (feature vectors) lying above it and a negative output for those lying below it.
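The same sign test works in any number of dimensions once the hyperplane is written as wᵀx + b. The NumPy sketch below evaluates it for a whole batch of 3-D feature vectors at once. The weights, bias, and points are illustrative values only, not taken from the article.

```python
import numpy as np

# Each row of X is one (hypothetical) 3-D feature vector x = [x1, x2, x3].
X = np.array([
    [0.2, 0.4, 0.1],
    [0.9, 0.8, 0.7],
])
w = np.array([1.0, 1.0, 1.0])  # illustrative weight vector, one weight per feature
b = -1.5                       # illustrative bias

# w^T x + b for every row at once (matrix-vector product plus a broadcast bias)
z = X @ w + b
side = np.where(z > 0, "above", np.where(z < 0, "below", "on"))
print(z)     # approximately [-0.8, 0.9]
print(side)  # ['below' 'above']
```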

In logistic regression, whether the input feature vector 𝑥 belongs to “CLASS 0” or “CLASS 1” is reported as a probability using the sigmoid function. It is given as:

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

The sigmoid function approaches 0 as z → −∞, equals 0.5 at z = 0, and approaches 1 as z → +∞. Since its range is (0, 1), it serves well as a probability function. We feed the hyperplane expression (the left-hand side of Eqn. 5) into the sigmoid function, which maps its output to a probability:

$$\sigma(\mathbf{w}^\top \mathbf{x} + b) = \frac{1}{1 + e^{-(\mathbf{w}^\top \mathbf{x} + b)}}$$
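Here is one way to write the sigmoid in NumPy, as a sketch. Evaluating 1 / (1 + e^(−z)) literally can overflow `np.exp` for large negative z. This version therefore uses the algebraically equivalent form e^z / (1 + e^z) when z < 0. The weights, bias, and input at the end are illustrative assumptions, not learned values.

```python
import numpy as np

def sigmoid(z):
    """Logistic sigmoid 1 / (1 + e^(-z)), written so that np.exp never overflows."""
    z = np.asarray(z, dtype=float)
    e = np.exp(-np.abs(z))  # always in (0, 1], even for very large |z|
    # z >= 0: 1 / (1 + e^(-z));  z < 0: e^z / (1 + e^z), which is the same function
    return np.where(z >= 0, 1.0 / (1.0 + e), e / (1.0 + e))

print(sigmoid([-1000.0, -2.0, 0.0, 2.0, 1000.0]))  # rises from 0 through 0.5 to 1

# Feeding the hyperplane output w^T x + b into the sigmoid gives P(CLASS 1 | x).
w = np.array([1.0, 1.0, 1.0])  # illustrative weights, not learned
b = -1.5                       # illustrative bias
x = np.array([0.9, 0.8, 0.7])
print(sigmoid(w @ x + b))      # w^T x + b = 0.9 > 0, so the probability is above 0.5
```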

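Following convention, we’ll put “CLASS 0” features below the boundary and “CLASS 1” features above it. Hence, an appropriate prediction criterion could be:

$$\text{prediction} = \begin{cases} 1 \ (\text{CLASS 1}) & \text{if } \sigma(\mathbf{w}^\top \mathbf{x} + b) \ge 0.5 \\ 0 \ (\text{CLASS 0}) & \text{if } \sigma(\mathbf{w}^\top \mathbf{x} + b) < 0.5 \end{cases}$$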

Think of the output of the sigmoid function as the probability that the input belongs to “CLASS 1”. If the output of the sigmoid function is 0.8, the model has a confidence of 0.8 that the input belongs to “CLASS 1”. Consequently, it has a confidence of 0.2 that the input belongs to “CLASS 0”.
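A minimal sketch of turning sigmoid outputs into hard “CLASS 1” / “CLASS 0” predictions, and of reading off the complementary “CLASS 0” confidence, follows. The function name `predict` and the probabilities are hypothetical. The threshold is a parameter with a default of 0.5 because, as discussed next, it need not be 0.5.

```python
import numpy as np

def predict(p_class1, threshold=0.5):
    """Return 1 (CLASS 1) where P(CLASS 1 | x) >= threshold, else 0 (CLASS 0)."""
    return (np.asarray(p_class1, dtype=float) >= threshold).astype(int)

p = np.array([0.8, 0.55, 0.3, 0.05])  # illustrative sigmoid outputs
print(predict(p))                 # [1 1 0 0]
print(predict(p, threshold=0.7))  # [1 0 0 0]
print(1.0 - p)                    # confidence that each input belongs to CLASS 0
```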

Since the sigmoid function reports a probability, you may choose a decision threshold other than 0.5, depending on your priority. One priority is the fraction of predictions made in favor of a particular class that are correct (precision). The other is the ability to detect most of the instances of that class (recall). Read more about this here:

The probability that an input feature vector x belongs to “CLASS 1”, given the model parameters w and b, is:

$$P(y = 1 \mid \mathbf{x}; \mathbf{w}, b) = \sigma(\mathbf{w}^\top \mathbf{x} + b)$$

Conversely, the probability that an input feature vector x belongs to “CLASS 0”, given the model parameters w and b, is:

$$P(y = 0 \mid \mathbf{x}; \mathbf{w}, b) = 1 - \sigma(\mathbf{w}^\top \mathbf{x} + b)$$

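Combining the two, the probability that an input feature vector x belongs to class y (0 or 1), given the model parameters w and b, is:

$$P(y \mid \mathbf{x}; \mathbf{w}, b) = \sigma(\mathbf{w}^\top \mathbf{x} + b)^{\,y} \, \bigl(1 - \sigma(\mathbf{w}^\top \mathbf{x} + b)\bigr)^{1 - y} \tag{9}$$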

Suppose y is the actual label of an example from the training dataset. Then Eqn. 9 gives the probability, estimated by the model, that the input belongs to class y when it is known to belong to class y (i.e., its actual label is y).

Hence, we want Eqn. 9 to produce a value close to 1. Such a value means the model has high confidence that a given input belongs to “CLASS 0” when it actually does. Similarly, the model should output a high probability that a given input belongs to “CLASS 1” when its actual label is also “CLASS 1”.
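Eqn. 9 is a compact way of writing the two cases as one formula. When y = 1 the second factor equals 1, and when y = 0 the first factor equals 1. The small NumPy sketch below shows that it yields values near 1 when the model is confidently right and near 0 when it is confidently wrong. The function name and probabilities are hypothetical.

```python
import numpy as np

def label_probability(p_class1, y):
    """Eqn. 9: P(y | x) = p**y * (1 - p)**(1 - y), where p = P(CLASS 1 | x)."""
    p = np.asarray(p_class1, dtype=float)
    y = np.asarray(y)
    return p ** y * (1.0 - p) ** (1 - y)

# Confident and correct: high probability assigned to the true labels [1, 0]
print(label_probability([0.9, 0.1], [1, 0]))  # both values are about 0.9
# Confident and wrong: low probability assigned to the true labels [1, 0]
print(label_probability([0.1, 0.9], [1, 0]))  # both values are about 0.1
```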

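We can extend this probability function to the whole training dataset using the multiplication rule of probability. Assuming the training examples are independent, this gives the likelihood function:

$$\mathcal{L}(\mathbf{w}, b) = \prod_{i=1}^{m} P\bigl(y^{(i)} \mid \mathbf{x}^{(i)}; \mathbf{w}, b\bigr)$$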

Variables Description:

y⁽ⁱ⁾: Label of the iᵗʰ training example (0 or 1)

x⁽ⁱ⁾: Feature vector of the iᵗʰ training example

m: Total number of training examples

The likelihood tells how well the model’s estimates align with the actual labels of the training examples, and our goal is to maximize it.

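It is convenient to work with the log of the likelihood instead. The logarithm turns the product into a sum, which is easier to differentiate and avoids numerical underflow:

$$\ell(\mathbf{w}, b) = \log \mathcal{L}(\mathbf{w}, b) = \sum_{i=1}^{m} \log P\bigl(y^{(i)} \mid \mathbf{x}^{(i)}; \mathbf{w}, b\bigr)$$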

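Substituting from Eqn. 9:

$$\ell(\mathbf{w}, b) = \sum_{i=1}^{m} \Bigl[ y^{(i)} \log \sigma\bigl(\mathbf{w}^\top \mathbf{x}^{(i)} + b\bigr) + \bigl(1 - y^{(i)}\bigr) \log\bigl(1 - \sigma(\mathbf{w}^\top \mathbf{x}^{(i)} + b)\bigr) \Bigr] \tag{13}$$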

Note that the likelihood, 𝓛(w, b), ranges from 0 to 1, which means the log-likelihood, 𝓁(w, b), ranges from −∞ to 0. Likelihood values close to zero indicate a severe discrepancy between the model’s estimates and the actual labels of the training data. This poor performance translates to large negative values of the log-likelihood, 𝓁(w, b).

What if we negate the log-likelihood?

The range of the negative log-likelihood becomes 0 to +∞. Large positive values indicate a large error in the model’s estimates, and values close to zero mean the model’s estimates align closely with the actual labels of the training data. The negative log-likelihood can therefore be treated as an error, cost, or loss function, where large values mean large error and small values signal small error.

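The loss function in logistic regression, aka Binary Cross Entropy, is the negative log-likelihood normalized by the number of training examples:

$$J(\mathbf{w}, b) = -\frac{1}{m} \, \ell(\mathbf{w}, b)$$

Substituting from Eqn. 13:

$$J(\mathbf{w}, b) = -\frac{1}{m} \sum_{i=1}^{m} \Bigl[ y^{(i)} \log \sigma\bigl(\mathbf{w}^\top \mathbf{x}^{(i)} + b\bigr) + \bigl(1 - y^{(i)}\bigr) \log\bigl(1 - \sigma(\mathbf{w}^\top \mathbf{x}^{(i)} + b)\bigr) \Bigr] \tag{15}$$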

Normalizing by the number of training examples m gives the average loss, which prevents the size of the training dataset from influencing the value of the loss. As a result, the performance of different models can be compared easily.
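A minimal NumPy sketch of Eqn. 15 follows. The clipping constant `eps` is an implementation detail added here, not part of the formula, so that `np.log` never sees exactly 0 or 1. The last lines show the effect of normalizing by m: repeating the same dataset ten times leaves the average loss unchanged. The labels and probabilities are illustrative.

```python
import numpy as np

def binary_cross_entropy(y_true, p_class1, eps=1e-12):
    """Eqn. 15: mean of -[y log p + (1 - y) log(1 - p)] over the m examples."""
    y = np.asarray(y_true, dtype=float)
    # Clip probabilities away from 0 and 1 so the logarithms stay finite.
    p = np.clip(np.asarray(p_class1, dtype=float), eps, 1.0 - eps)
    return -np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p))

y = np.array([1, 0, 1, 0])
good = np.array([0.9, 0.1, 0.8, 0.2])  # estimates that agree with the labels
bad = np.array([0.1, 0.9, 0.2, 0.8])   # estimates that contradict them

print(binary_cross_entropy(y, good))   # small loss
print(binary_cross_entropy(y, bad))    # large loss
# Averaging over m: ten copies of the same data give the same loss.
print(np.isclose(binary_cross_entropy(np.tile(y, 10), np.tile(good, 10)),
                 binary_cross_entropy(y, good)))
```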

Since our objective is to maximize the likelihood, we can achieve the same effect with an equivalent and more convenient approach: minimizing the loss function.

From calculus, you may remember that the gradient is zero at the minimum of a function. Notice that the loss function depends on the model parameters (w, b), and we can reach its minimum by varying these parameter values.

Let’s find the gradient of the loss function with respect to the weight vector w and the bias b. We can then reach its minimum using the gradient descent optimization algorithm.

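The gradient of the loss function J(w, b) with respect to the jᵗʰ element of the weight vector w is given as:

$$\frac{\partial J}{\partial w_j} = -\frac{1}{m} \sum_{i=1}^{m} \left[ \frac{y^{(i)}}{\sigma(z^{(i)})} - \frac{1 - y^{(i)}}{1 - \sigma(z^{(i)})} \right] \frac{\partial \sigma(z^{(i)})}{\partial w_j}, \qquad z^{(i)} = \mathbf{w}^\top \mathbf{x}^{(i)} + b \tag{17}$$

The gradient of the sigmoid function is given as:

$$\frac{d\sigma(z)}{dz} = \sigma(z)\bigl(1 - \sigma(z)\bigr) \tag{18}$$

For a detailed derivation of the gradient of the sigmoid function, follow this link:

Substituting Eqn. 18 into Eqn. 17 and applying the chain rule, with ∂z⁽ⁱ⁾/∂wⱼ = 𝑥ⱼ⁽ⁱ⁾:

$$\begin{aligned}
\frac{\partial J}{\partial w_j} &= -\frac{1}{m} \sum_{i=1}^{m} \left[ \frac{y^{(i)}}{\sigma(z^{(i)})} - \frac{1 - y^{(i)}}{1 - \sigma(z^{(i)})} \right] \sigma(z^{(i)}) \bigl(1 - \sigma(z^{(i)})\bigr) \, x_j^{(i)} \\
&= -\frac{1}{m} \sum_{i=1}^{m} \Bigl[ y^{(i)} \bigl(1 - \sigma(z^{(i)})\bigr) - \bigl(1 - y^{(i)}\bigr) \sigma(z^{(i)}) \Bigr] x_j^{(i)} \\
&= \frac{1}{m} \sum_{i=1}^{m} \bigl(\sigma(z^{(i)}) - y^{(i)}\bigr) \, x_j^{(i)}
\end{aligned} \tag{23}$$

Similarly, the gradient of the loss function with respect to the bias b can be computed as:

$$\frac{\partial J}{\partial b} = -\frac{1}{m} \sum_{i=1}^{m} \left[ \frac{y^{(i)}}{\sigma(z^{(i)})} - \frac{1 - y^{(i)}}{1 - \sigma(z^{(i)})} \right] \frac{\partial \sigma(z^{(i)})}{\partial b} \tag{25}$$

Substituting Eqn. 18 into Eqn. 25 and applying the chain rule, with ∂z⁽ⁱ⁾/∂b = 1:

$$\begin{aligned}
\frac{\partial J}{\partial b} &= -\frac{1}{m} \sum_{i=1}^{m} \left[ \frac{y^{(i)}}{\sigma(z^{(i)})} - \frac{1 - y^{(i)}}{1 - \sigma(z^{(i)})} \right] \sigma(z^{(i)}) \bigl(1 - \sigma(z^{(i)})\bigr) \\
&= \frac{1}{m} \sum_{i=1}^{m} \bigl(\sigma(z^{(i)}) - y^{(i)}\bigr)
\end{aligned} \tag{30}$$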
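One way to check Eqns. 23 and 30 is to compare them with a numerical (central finite-difference) estimate of the gradient. The sketch below does that on random data. The data, the step size h = 1e-6, and the function names are assumptions made for illustration. The vectorized expression `X.T @ error / m` computes Eqn. 23 for every j at once.

```python
import numpy as np

def sigmoid(z):
    e = np.exp(-np.abs(z))  # overflow-free form of 1 / (1 + e^(-z))
    return np.where(z >= 0, 1.0 / (1.0 + e), e / (1.0 + e))

def loss(w, b, X, y):
    """Binary cross entropy J(w, b) from Eqn. 15."""
    p = sigmoid(X @ w + b)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def gradients(w, b, X, y):
    """Eqn. 23: dJ/dw_j = mean((sigma(z) - y) * x_j);  Eqn. 30: dJ/db = mean(sigma(z) - y)."""
    error = sigmoid(X @ w + b) - y  # shape (m,)
    grad_w = X.T @ error / len(y)   # shape (n,), all j at once
    grad_b = np.mean(error)
    return grad_w, grad_b

# Random (synthetic) data: 20 examples with 3 features each
rng = np.random.default_rng(42)
X = rng.normal(size=(20, 3))
y = rng.integers(0, 2, size=20).astype(float)
w = rng.normal(size=3)
b = 0.1

grad_w, grad_b = gradients(w, b, X, y)

# Central finite differences: (J(theta + h) - J(theta - h)) / (2h)
h = 1e-6
numeric_w = np.array([
    (loss(w + h * e_j, b, X, y) - loss(w - h * e_j, b, X, y)) / (2 * h)
    for e_j in np.eye(3)
])
numeric_b = (loss(w, b + h, X, y) - loss(w, b - h, X, y)) / (2 * h)

print(np.max(np.abs(grad_w - numeric_w)), abs(grad_b - numeric_b))  # both tiny
```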

The loss function might look like this, and we want to reach the bottom, where the loss is minimal. The gradient helps us approach this minimum. As you can see in the figure, the gradient is negative to the left of the minimum, positive to its right, and zero at the minimum.

Suppose you are to the left of the minimum. To approach it, you must move to the right, which amounts to increasing the value of wⱼ or b. Similarly, if you are to the right of the minimum, you must move to the left by decreasing the value of wⱼ or b. This process of moving in the direction opposite to the gradient to reduce the cost is known as gradient descent. Mathematically, for the loss J(w, b):

$$w_j := w_j - \alpha \, \frac{\partial J}{\partial w_j} \tag{31}$$

$$b := b - \alpha \, \frac{\partial J}{\partial b} \tag{32}$$

Here α is the learning rate, which determines the size of the step that wⱼ and b take toward the minimum. Its value typically lies between 0 and 1.

After updating the model parameters wⱼ and b, the loss should decrease (provided α is small enough), since you have taken a step toward the minimum of the loss function.
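As a sketch of a single gradient descent step (Eqns. 31 and 32), the snippet below computes both gradients first and only then updates w and b, so the update is simultaneous. The four-example dataset and α = 0.5 are illustrative assumptions, and the function names are hypothetical. Starting from w = 0 and b = 0, every prediction is 0.5, so the loss is log 2. One step then lowers it.

```python
import numpy as np

def sigmoid(z):
    e = np.exp(-np.abs(z))  # overflow-free form of 1 / (1 + e^(-z))
    return np.where(z >= 0, 1.0 / (1.0 + e), e / (1.0 + e))

def loss(w, b, X, y):
    """Binary cross entropy J(w, b) from Eqn. 15."""
    p = sigmoid(X @ w + b)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def gradient_descent_step(w, b, X, y, alpha):
    """One simultaneous update of all weights and the bias (Eqns. 31 and 32)."""
    error = sigmoid(X @ w + b) - y
    grad_w = X.T @ error / len(y)  # Eqn. 23 for every j
    grad_b = np.mean(error)        # Eqn. 30
    # Both gradients are computed before either parameter changes.
    return w - alpha * grad_w, b - alpha * grad_b

X = np.array([[0.1, 0.2], [0.3, 0.1], [0.8, 0.9], [0.9, 0.7]])  # toy features
y = np.array([0.0, 0.0, 1.0, 1.0])
w, b = np.zeros(2), 0.0
alpha = 0.5  # learning rate (illustrative value)

before = loss(w, b, X, y)
w, b = gradient_descent_step(w, b, X, y, alpha)
after = loss(w, b, X, y)
print(before, after)  # the loss after the step is smaller
```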

Before starting the training process, we’ll normalize the feature values (all the values of “𝑥” in the training dataset) so that they lie on a common scale, which is important for smooth convergence of the model. There are several normalization techniques. We’ll go with the Min-Max scaler, which maps each feature to the range [0, 1]:

$$x_{\text{norm}} = \frac{x - x_{\min}}{x_{\max} - x_{\min}} \tag{33}$$
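A minimal NumPy sketch of Eqn. 33 follows, applied to each feature (column) separately. 𝑥ₘᵢₙ and 𝑥ₘₐₓ are learned from the training set only and then reused on the test set, so test values can land outside [0, 1]. The guard against constant features (where 𝑥ₘₐₓ = 𝑥ₘᵢₙ) is an extra safety measure added here. The function names and data are hypothetical.

```python
import numpy as np

def fit_min_max(X_train):
    """Learn per-feature x_min and x_max from the training set only."""
    return X_train.min(axis=0), X_train.max(axis=0)

def transform_min_max(X, x_min, x_max):
    """Eqn. 33 applied column-wise: (x - x_min) / (x_max - x_min)."""
    # Avoid division by zero for a constant feature (an added safeguard).
    span = np.where(x_max > x_min, x_max - x_min, 1.0)
    return (X - x_min) / span

X_train = np.array([[2.0, 100.0], [4.0, 300.0], [6.0, 200.0]])
X_test = np.array([[3.0, 250.0], [8.0, 50.0]])

x_min, x_max = fit_min_max(X_train)
print(transform_min_max(X_train, x_min, x_max))  # every column now spans [0, 1]
print(transform_min_max(X_test, x_min, x_max))   # test values may fall outside [0, 1]
```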

1. Prepare the training and test datasets.

2. Normalize all the features of the training dataset using Eqn. 33 to bring them to a common scale. Use the same parameters computed from the training set (𝑥ₘᵢₙ and 𝑥ₘₐₓ) to normalize the test set.

3. Start with random values for the weight vector and bias, typically close to zero.

4. Calculate the loss (binary cross entropy) on the training dataset using Eqn. 15.

5. Calculate the gradient of the loss with respect to every weight and the bias using Eqn. 23 and Eqn. 30, respectively.

6. Update all the weights and the bias simultaneously using Eqn. 31 and Eqn. 32, respectively.

7. Return to step 4 and continue until the loss stops improving significantly, or repeat for a fixed number of iterations.

8. Test the performance of the model by calculating the loss, accuracy, precision, or recall on the test set.
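The steps above can be sketched end to end as follows. This is not the author’s linked code. It is a minimal NumPy sketch on synthetic data: two Gaussian clouds in 3-D, an assumption made purely for illustration. The learning rate of 0.5, the cap of 2,000 epochs, and the stopping tolerance of 1e-7 are arbitrary choices, and the function names are hypothetical. Because the data are synthetic, the accuracy it prints says nothing about the result reported in this article.

```python
import numpy as np


def sigmoid(z):
    e = np.exp(-np.abs(z))  # overflow-free form of 1 / (1 + e^(-z))
    return np.where(z >= 0, 1.0 / (1.0 + e), e / (1.0 + e))


def bce(y, p, eps=1e-12):
    """Binary cross entropy (Eqn. 15), with p clipped away from 0 and 1."""
    p = np.clip(p, eps, 1.0 - eps)
    return -np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p))


def train_logistic_regression(X, y, alpha=0.5, max_epochs=2000, tol=1e-7, seed=0):
    """Batch gradient descent following steps 3-7 above."""
    rng = np.random.default_rng(seed)
    w = rng.normal(scale=0.01, size=X.shape[1])  # step 3: small random weights
    b = float(rng.normal(scale=0.01))            # step 3: small random bias
    prev_loss = np.inf
    for _ in range(max_epochs):
        p = sigmoid(X @ w + b)
        loss = bce(y, p)                          # step 4: current training loss
        if prev_loss - loss < tol:                # step 7: stop once improvement stalls
            break
        prev_loss = loss
        error = p - y
        grad_w = X.T @ error / len(y)             # step 5: Eqn. 23
        grad_b = np.mean(error)                   # step 5: Eqn. 30
        w, b = w - alpha * grad_w, b - alpha * grad_b  # step 6: simultaneous update
    return w, b


# Step 1: synthetic, well-separated 3-D data, shuffled and split 300 / 100
rng = np.random.default_rng(1)
X = np.vstack([
    rng.normal(loc=2.0, scale=1.0, size=(200, 3)),  # CLASS 0 cloud
    rng.normal(loc=5.0, scale=1.0, size=(200, 3)),  # CLASS 1 cloud
])
y = np.concatenate([np.zeros(200), np.ones(200)])
idx = rng.permutation(len(y))
train_idx, test_idx = idx[:300], idx[300:]

# Step 2: Min-Max scaling (Eqn. 33) using training-set statistics only
x_min, x_max = X[train_idx].min(axis=0), X[train_idx].max(axis=0)
X_train = (X[train_idx] - x_min) / (x_max - x_min)
X_test = (X[test_idx] - x_min) / (x_max - x_min)

w, b = train_logistic_regression(X_train, y[train_idx])

# Step 8: evaluate on the held-out test set with a 0.5 threshold
p_test = sigmoid(X_test @ w + b)
accuracy = np.mean((p_test >= 0.5) == (y[test_idx] == 1))
print("test loss:", bce(y[test_idx], p_test))
print("test accuracy:", accuracy)
```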

I have implemented the logistic regression model on the example discussed in this article, i.e., binary classification with a 3-D feature space. Below are the results and the link to the code:

The accuracy comes out to be 95%, which is quite impressive. However, this was only possible because the contrived data points were very simple and easily separable by a linear decision boundary.

Find the code here: