When I first ventured into the world of machine learning, I was mesmerized by its possibilities and by its power to transform data into actionable insights. Like many of you, I started with the basics, diving into linear regression, decision trees, and neural networks. However, as I delved deeper, I discovered a fascinating technique that seemed to bridge the gap between simplicity and sophistication: MARS, or Multivariate Adaptive Regression Splines.

In this article, I want to share my journey of discovering MARS Regression. I'll walk you through what it is, how it works, and why it can be a valuable addition to your machine learning toolkit. We will also work through a practical example in R, so you can see MARS in action and understand how to apply it to your own projects.

So, whether you're a seasoned data scientist looking to broaden your repertoire or a passionate newcomer eager to explore advanced regression techniques, I hope this article gives you valuable insights and practical knowledge. Let us embark on this journey together and unlock the potential of MARS Regression.

“Multivariate Adaptive Regression Splines (MARS) is a form of regression analysis introduced by Jerome H. Friedman in 1991. It is a non-parametric regression technique that builds flexible models by fitting piecewise linear regressions.” (Wikipedia)

In other words, MARS is a powerful regression technique designed to model complex relationships in data. Traditional linear regression assumes a straight-line relationship between the predictors and the target variable. MARS, by contrast, can model non-linear relationships and interactions among predictors without prior assumptions about the form of those relationships. It splits the data into smaller regions and fits a simple linear model in each one, which makes it a flexible and adaptive approach to regression analysis.

History and Development

Jerome H. Friedman introduced MARS Regression in 1991 to extend traditional regression methods. Because it allows for non-linearity and interactions in the model, MARS offers a more adaptable and powerful approach to analyzing complex data relationships. This innovation marked a key advance in regression analysis.

Advantages

· Handles Non-Linear Relationships: MARS can capture patterns in data that are not simply straight lines. For example, if you are predicting house prices, MARS can model how prices change at different rates for houses of different sizes.

· Captures Interaction Effects: MARS can identify and model interactions between variables. For example, it can capture how the combined effect of house size and location affects the price. (In the R `earth` package, interactions are only considered when you set `degree = 2` or higher. The default, `degree = 1`, fits an additive model.)

· Automatic Model Building: MARS automatically selects the important variables, interactions, and knot locations, which makes the modeling process easier.

· Interpretability: Although MARS models can be complex, they are often more interpretable than neural networks, which are frequently considered “black boxes.” A MARS model is a sum of simple hinge functions with readable coefficients, so you can see how it arrives at its predictions.

Disadvantages

· Computational Complexity: MARS can be slower and require more computing power than simpler models, especially on large datasets. This is because the forward pass evaluates many candidate variables and knot locations at every step.

· Overfitting: Because MARS is very flexible, it may fit the training data too closely, capturing noise rather than the true pattern. This can lead to poorer performance on new data.

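Basis Functions

In MARS, basis functions are the building blocks of the model. They are simple functions that help describe the relationship between the input variables (like house size or number of bedrooms) and the output variable (like house price). Specifically, MARS uses hinge functions of the form h(x-t) = max(0, x-t) and their mirror image h(t-x) = max(0, t-x), where t is a knot. The fitted model is an intercept plus a weighted sum of these hinges, or of products of hinges when interactions are allowed.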
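As a small illustration, the hinge functions just described fit in a couple of lines of base R. The helper names `hinge_right` and `hinge_left`, the knot at 3, and the coefficients below are all made up for this sketch. They are not part of the `earth` package.

```r
# Hinge (basis) functions as used conceptually in MARS (hypothetical helper names).
# hinge_right(x, t) = max(0, x - t): zero left of the knot t, rising to the right.
# hinge_left(x, t)  = max(0, t - x): the mirror image, zero right of the knot.
hinge_right <- function(x, t) pmax(0, x - t)
hinge_left  <- function(x, t) pmax(0, t - x)

x <- c(1, 2, 3, 4, 5)
knot <- 3  # illustrative knot location

right <- hinge_right(x, knot)  # 0 0 0 1 2
left  <- hinge_left(x, knot)   # 2 1 0 0 0

# A MARS-style prediction: an intercept plus a weighted sum of hinges.
# These coefficients are invented purely for illustration.
prediction <- 10 + 1.5 * right - 2 * left
print(prediction)  # 6 8 10 11.5 13

# An interaction term is simply a product of hinges on two different variables.
z <- c(5, 4, 3, 2, 1)
interaction_term <- hinge_right(x, 2) * hinge_left(z, 4)
print(interaction_term)  # 0 0 1 4 9
```

Each hinge is zero on one side of its knot. That is why a mirrored pair at the same knot lets the fitted line bend there, with a different slope on each side.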

Piecewise Linear Segments:

MARS uses piecewise linear segments: it breaks the data into different sections and fits a straight line to each one. Imagine fitting several straight lines to a winding road instead of using one long curve.

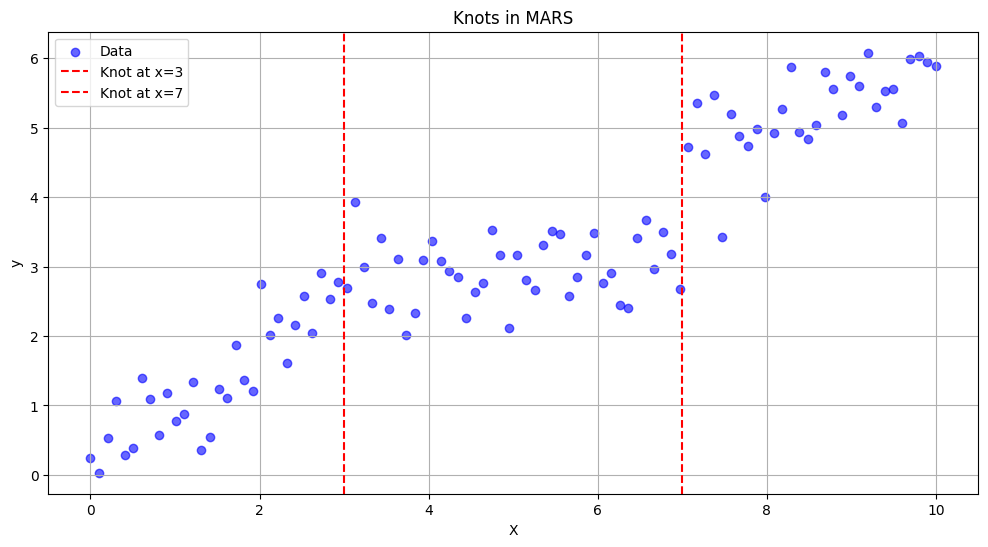
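Once a knot is fixed, the "several straight lines" idea can be sketched with ordinary `lm()`. Adding the two mirrored hinge terms as predictors gives a line that bends at the knot. This is a minimal sketch on synthetic data, and it assumes the knot (here 5) is already known. MARS itself would search for it.

```r
# Piecewise linear fit with a single, fixed knot using ordinary least squares.
set.seed(1)
x <- seq(0, 10, length.out = 200)
# Synthetic data: slope 2 up to x = 5, slope -1 afterwards, plus a little noise.
y <- ifelse(x < 5, 2 * x, 10 - (x - 5)) + rnorm(length(x), sd = 0.3)

knot <- 5  # assumed known here; MARS would choose it from the data
fit <- lm(y ~ pmax(0, x - knot) + pmax(0, knot - x))
print(coef(fit))
```

The true function is 10 - 1 * h(x-5) - 2 * h(5-x), so the coefficients should come out near 10, -1, and -2. The left-hand coefficient is negative because h(5-x) grows as x moves left of the knot, while y falls.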

Knots

· Knots are the points where the data is split so that different piecewise linear segments can be fitted. They act like hinges or joints where the straight lines meet, and MARS chooses their locations from the observed values of each predictor.

· Knots let MARS model the data flexibly by allowing the lines to change direction at certain points, which helps it capture the underlying patterns more accurately.
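To see how a knot location can be chosen from the data, here is a brute-force sketch for a single predictor. Every interior observed value is tried as a knot, and the one that gives the smallest residual sum of squares wins. `find_best_knot` is a hypothetical helper written for illustration. Real MARS implementations use much faster update formulas than refitting `lm()` for every candidate.

```r
# Brute-force knot search for one predictor (hypothetical helper, not earth's code):
# try each interior data value as a knot, fit the mirrored hinge pair with lm(),
# and keep the knot with the smallest residual sum of squares (RSS).
find_best_knot <- function(x, y) {
  candidates <- sort(unique(x))
  candidates <- candidates[-c(1, length(candidates))]  # skip the extreme values
  rss <- sapply(candidates, function(knot) {
    fit <- lm(y ~ pmax(0, x - knot) + pmax(0, knot - x))
    sum(residuals(fit)^2)
  })
  list(knot = candidates[which.min(rss)], rss = min(rss))
}

# Synthetic data whose slope changes at x = 4 (0.5 before, 3 after)
set.seed(2)
x <- runif(150, 0, 10)
y <- ifelse(x < 4, 1 + 0.5 * x, 3 + 3 * (x - 4)) + rnorm(150, sd = 0.2)

best <- find_best_knot(x, y)
print(best$knot)  # should land close to 4
```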

Forward and Backward Pass

· In the forward pass, MARS starts with a model that contains only the intercept and adds basis functions step by step. At each step it searches over every predictor and candidate knot, and adds the pair of mirrored hinge functions that most reduces the residual error. It stops when a maximum number of terms is reached or the improvement becomes negligible. Think of it as building a LEGO structure piece by piece.

· In the backward pass, MARS simplifies the model by removing, one at a time, the basis functions that contribute least to the fit. It then keeps the version of the model with the best Generalized Cross-Validation (GCV) score. This helps avoid overfitting. Imagine removing unnecessary parts from your LEGO structure to make it cleaner and more efficient.
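The two passes can be combined into a toy, additive-only version of MARS in base R, using the built-in `mtcars` data. Treat this strictly as a teaching sketch under several assumptions:

- It ignores interactions.
- It uses a GCV penalty of 2 per knot, which I understand to be the `earth` default for additive models.
- It skips the minimum-span rules and fast updates of the real algorithm.

Its selected terms therefore need not match what `earth()` reports. All function names are hypothetical.

```r
# Toy additive (degree = 1) MARS-style forward and backward pass.
# Hypothetical helpers; this is NOT the earth package's implementation.

# Mirrored hinge pair at a knot: max(0, x - knot) and max(0, knot - x)
hinge_pair <- function(x, knot) cbind(pmax(0, x - knot), pmax(0, knot - x))

# Residual sum of squares of a least-squares fit that includes an intercept
rss_of <- function(B, y) sum(lm.fit(cbind(1, B), y)$residuals^2)

# GCV with an assumed penalty of 2 per knot
gcv <- function(rss, n, nterms, penalty = 2) {
  enp <- nterms + penalty * (nterms - 1) / 2  # effective number of parameters
  if (enp >= n) return(Inf)
  rss / (n * (1 - enp / n)^2)
}

# Forward pass: greedily add the hinge pair (variable, knot) that most reduces RSS
forward_pass <- function(X, y, max_terms = 9) {
  B <- matrix(0, nrow = nrow(X), ncol = 0)
  labels <- character(0)
  while (1 + ncol(B) + 2 <= max_terms) {  # the 1 counts the intercept
    best <- NULL
    for (j in seq_len(ncol(X))) {
      knots <- sort(unique(X[, j]))
      knots <- knots[-c(1, length(knots))]  # skip the extreme values
      for (knot in knots) {
        r <- rss_of(cbind(B, hinge_pair(X[, j], knot)), y)
        if (is.null(best) || r < best$rss) best <- list(rss = r, j = j, knot = knot)
      }
    }
    if (is.null(best)) break
    nm <- colnames(X)[best$j]
    B <- cbind(B, hinge_pair(X[, best$j], best$knot))
    labels <- c(labels, sprintf("h(%s-%g)", nm, best$knot), sprintf("h(%g-%s)", best$knot, nm))
  }
  colnames(B) <- labels
  B
}

# Backward pass: repeatedly drop the term whose removal raises RSS the least,
# remembering the subset with the lowest GCV along the way
backward_pass <- function(B, y, penalty = 2) {
  n <- length(y)
  keep <- seq_len(ncol(B))
  best_keep <- keep
  best_gcv <- gcv(rss_of(B, y), n, ncol(B) + 1, penalty)
  while (length(keep) > 0) {
    rss_drop <- sapply(keep, function(k) rss_of(B[, setdiff(keep, k), drop = FALSE], y))
    keep <- keep[-which.min(rss_drop)]
    g <- gcv(min(rss_drop), n, length(keep) + 1, penalty)
    if (g < best_gcv) {
      best_gcv <- g
      best_keep <- keep
    }
  }
  list(B = B[, best_keep, drop = FALSE], gcv = best_gcv)
}

X <- as.matrix(mtcars[, -1])  # all predictors except mpg
y <- mtcars$mpg
B_full <- forward_pass(X, y, max_terms = 9)
pruned <- backward_pass(B_full, y)
print(colnames(pruned$B))
print(pruned$gcv)
```

The forward pass deliberately overshoots, always adding pairs up to `max_terms`. The backward pass then decides, via GCV, how many of those terms are worth keeping.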

Install Required Packages: First, make sure you have the necessary packages installed. You can install them using `install.packages()` if you haven't already.

```r
install.packages("earth")
install.packages("caret")
install.packages("ggplot2")
```

Load Libraries

```r
library(earth)
library(caret)
library(ggplot2)
```

Load the Dataset

```r
# Load the dataset (built into R)
data(mtcars)

# Check the structure of the dataset
str(mtcars)
```

Implement MARS

```r
# Split the data into training and testing sets
set.seed(42)
train_index <- createDataPartition(mtcars$mpg, p = 0.7, list = FALSE)
train_data <- mtcars[train_index, ]
test_data <- mtcars[-train_index, ]

# Train the MARS model
mars_model <- earth(mpg ~ ., data = train_data)

# Predict on test data (predict() returns a one-column matrix, so flatten it)
y_pred <- as.vector(predict(mars_model, newdata = test_data))

# Calculate Mean Squared Error
mse <- mean((test_data$mpg - y_pred)^2)
cat("Mean Squared Error:", mse, "\n")
```
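MSE is expressed in squared miles per gallon, which can be hard to interpret. A minimal helper like the one below also reports RMSE, mean absolute error, and the test-set R-squared from the same two vectors. `regression_metrics` is a hypothetical base R function, not part of caret or earth.

```r
# Hypothetical helper: common regression error metrics from actual and predicted values.
regression_metrics <- function(actual, predicted) {
  predicted <- as.vector(predicted)  # flattens a one-column matrix, e.g. from predict()
  resid <- actual - predicted
  mse <- mean(resid^2)
  c(MSE  = mse,
    RMSE = sqrt(mse),  # same units as the target (mpg)
    MAE  = mean(abs(resid)),
    R2   = 1 - sum(resid^2) / sum((actual - mean(actual))^2))
}

# Usage with the objects from the code above:
# regression_metrics(test_data$mpg, y_pred)

# Small self-check with made-up numbers:
m <- regression_metrics(c(1, 2, 3, 4), c(1.5, 2, 2.5, 4))
print(m)  # MSE 0.125, RMSE ~0.354, MAE 0.25, R2 0.9
```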

Visualize Results

```r
# Visualize actual vs predicted values
results <- data.frame(actual = test_data$mpg, predicted = as.vector(y_pred))

ggplot(data = results, aes(x = actual, y = predicted)) +
  geom_point(alpha = 0.7) +
  geom_abline(intercept = 0, slope = 1, color = "red", linetype = "dashed") +
  labs(x = "Actual Values", y = "Predicted Values", title = "Actual vs Predicted Values") +
  theme_minimal()
```

Summary of the MARS Model

```r
summary(mars_model)
```

The `summary()` function for a MARS model lists the terms (basis functions) in the final model together with their coefficients (weights). Each basis function is a hinge function of one predictor, or a product of hinge functions when interactions are allowed. The prediction is the intercept plus the weighted sum of these terms.

Coefficients:

· Intercept: Approximately 16.76. In a MARS model this is the predicted `mpg` when every hinge function equals zero, which here means `wt` is exactly 3.215 and `qsec` is at most 17.4 seconds. It is not the prediction with all predictors set to zero.

· h(3.215-wt): This term is nonzero only when `wt` (weight in thousands of pounds) is below 3.215. Its coefficient of approximately 7.57 means that each 1,000 lb below 3.215 adds about 7.57 to the predicted `mpg`, so lighter cars are predicted to be more fuel-efficient.

· h(wt-3.215): This term is nonzero only when `wt` is above 3.215. Its coefficient of approximately -2.02 means that each additional 1,000 lb above 3.215 lowers the predicted `mpg` by about 2.02, a much flatter slope than on the lighter side.

· h(qsec-17.4): This term is nonzero only when `qsec` (quarter-mile time) exceeds 17.4 seconds. Each additional second above 17.4 adds approximately 1.62 to the predicted `mpg`.
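Because the model is just an intercept plus weighted hinges, you can reproduce its predictions by hand. The sketch below plugs in the rounded coefficients quoted above, so its results will differ slightly from `predict(mars_model, ...)`. `mars_by_hand` is a hypothetical name used only for illustration.

```r
# Reproduce the MARS prediction by hand from the rounded coefficients above.
h <- function(x) pmax(0, x)  # hinge function

mars_by_hand <- function(wt, qsec) {
  16.76 +
    7.57 * h(3.215 - wt) -  # active only for wt < 3.215
    2.02 * h(wt - 3.215) +  # active only for wt > 3.215
    1.62 * h(qsec - 17.4)   # active only for qsec > 17.4
}

print(mars_by_hand(wt = 3.215, qsec = 17.0))  # all hinges are 0: 16.76
print(mars_by_hand(wt = 2.215, qsec = 17.0))  # 16.76 + 7.57 = 24.33
print(mars_by_hand(wt = 4.215, qsec = 18.4))  # 16.76 - 2.02 + 1.62 = 16.36
```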

Model Selection:

· Selected Terms: The forward pass built 11 terms, and the backward pass kept 4 of them (the intercept and three hinge functions).

· Selected Predictors: 2 of the 10 predictors, `wt` and `qsec`, were kept in the final model.

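Fit Statistics (GRSq and GCV):

· GRSq: The generalized R-squared (GRSq) is 0.8039. It is computed from the GCV score rather than from the raw training error. It therefore estimates how much of the variance in `mpg` (about 80%) the model would explain on new data, not on the training data.

· GCV: The Generalized Cross-Validation (GCV) score is 6.9537. It is the residual sum of squares per observation, inflated by a penalty that grows with the number of terms and knots, so it estimates the error on new data. Lower values indicate better models, and the backward pass uses GCV to decide how many terms to keep.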
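To see where these two numbers come from, here is a sketch of the formulas as I understand them from the `earth` documentation. GCV divides the training RSS by a factor that shrinks as the effective number of parameters grows. GRSq compares the model's GCV with that of an intercept-only model. The penalty of 2 per knot is an assumption (my understanding of the default for additive models), and the inputs below are made-up numbers, not the `mtcars` fit.

```r
# GCV and GRSq as I understand earth's documented formulas (assumed, not verified):
#   effective parameters  C    = nterms + penalty * (nterms - 1) / 2
#   GCV                        = RSS / (n * (1 - C / n)^2)
#   GRSq                       = 1 - GCV / GCV_null  (GCV_null: intercept-only model)
gcv <- function(rss, n, nterms, penalty = 2) {
  enp <- nterms + penalty * (nterms - 1) / 2
  if (enp >= n) return(Inf)  # too many effective parameters for the data
  rss / (n * (1 - enp / n)^2)
}

grsq <- function(rss, tss, n, nterms, penalty = 2) {
  # The intercept-only model has nterms = 1 and RSS equal to the total sum of squares
  1 - gcv(rss, n, nterms, penalty) / gcv(tss, n, 1, penalty)
}

# Illustration with made-up numbers (not the mtcars model above)
n <- 25; tss <- 900; rss <- 100
print(gcv(rss, n, nterms = 4))         # 4 terms -> 7 effective parameters
print(grsq(rss, tss, n, nterms = 4))   # about 0.80
print(1 - rss / tss)                   # ordinary RSq, about 0.89
```

With these numbers the ordinary R-squared is higher than GRSq, because GRSq charges the model for every term and knot it uses.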

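Interactions and Degrees:

· The summary reports 1 term of degree 0 (the intercept) and 3 terms of degree 1 (single-variable hinge functions). The model is therefore additive and contains no interactions. This is expected, because `earth()` fits an additive model by default (`degree = 1`).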

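Overall Fit:

· RSq: The standard R-squared (RSq) is 0.8929, meaning the model explains about 89.29% of the variance in `mpg` on the training data. This indicates a strong fit. However, because it is measured on the same data used to build the model, it is optimistic: the lower GRSq and the test-set MSE are better guides to performance on new data.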

In this article, we explored MARS Regression, a flexible technique that can handle non-linear relationships and interactions between predictors. We covered its key concepts, such as basis functions, knots, and the forward and backward passes. We also discussed its advantages, like handling complex data patterns, and its disadvantages, like potential computational cost. Finally, we worked through a practical example in R to illustrate how MARS works in practice.

I encourage you to try MARS Regression in your own projects. Whether you work in finance, healthcare, marketing, or any field with complex data, MARS can help you reveal hidden patterns and make more accurate predictions. Give it a shot and see how it can enhance your data analysis skills!

Sources

1. Wikipedia: Multivariate Adaptive Regression Splines (https://en.wikipedia.org/wiki/Multivariate_adaptive_regression_spline)

2. Original Research Paper: Friedman, J. H. (1991), Multivariate Adaptive Regression Splines (http://www.stat.yale.edu/~lc436/08Spring665/Mars_Friedman_91.pdf)

Further Reading

1. MARS in Python (https://machinelearningmastery.com/multivariate-adaptive-regression-splines-mars-in-python/)

2. Is MARS Better than Neural Networks? (https://www.casact.org/sites/default/files/database/forum_03spforum_03spf269.pdf)