In the world of machine learning, regression analysis remains a foundational technique for understanding the relationships between variables. Traditional linear regression, although powerful, often falls short when dealing with complex data that suffers from multicollinearity or when the model risks overfitting. This is where Ridge and Lasso regression come into play, offering robust alternatives through the power of regularization. Let’s dive into these two techniques and see how they can enhance your predictive modeling toolkit.

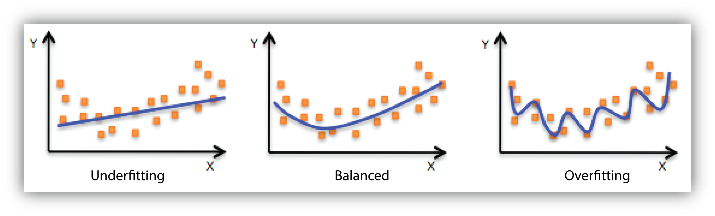

When building machine learning models, two common issues that can arise are overfitting and underfitting. These concepts are crucial to understand because they directly affect the model’s performance on new, unseen data.

What’s Overfitting?

Overfitting happens when a model learns not only the underlying patterns in the training data but also the noise and random fluctuations. As a result, the model performs very well on the training data but poorly on new, unseen data.

Overfitting models have low bias and high variance.

What’s Underfitting?

Underfitting occurs when a model is too simple to capture the underlying patterns in the data. It fails to learn the training data adequately and, as a result, performs poorly on both the training data and new data.

Underfitting models have high bias and low variance.

Our goal is to build a model with low bias and low variance.
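The terms bias and variance can be made precise with the standard decomposition of expected squared error. The notation here is an assumption for illustration: the data follow $y = f(x) + \varepsilon$ with noise variance $\sigma^2$, and $\hat{f}(x)$ is the prediction of a model trained on a random training set.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
$$

An overfitting model keeps the first term small but inflates the second, while an underfitting model does the opposite.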

What’s Regularization?

Regularization is a method used to forestall overfitting by including a penalty to the mannequin for having too many parameters. This penalty discourages the mannequin from changing into too advanced, which helps enhance its generalization to new, unseen knowledge. The first types of regularization are Ridge regression and Lasso regression, every with its distinctive method to imposing this penalty.

What’s Ridge Regression (L2 Regularization)?

Ridge regression addresses multicollinearity by adding a penalty equal to the sum of the squares of the coefficients. This method shrinks the coefficients, but never to exactly zero, allowing all variables to contribute to the prediction.

To understand this, we first need to look at the cost function of linear regression, which is the mean squared error (MSE) between the predicted and actual values.

In line with this value operate when the info is overfitting Eg. Let’s take overfitting as 100%. That implies that the most effective match line predicts all of the values precisely appropriately, therefore the imply sq. error = 0.

This fashion if we feed new knowledge to this mannequin will probably be extra inaccurate.

Hence, to remedy overfitting, we use ridge regression.

Ridge regression adds a penalty term to the linear regression cost function to avoid overfitting, so the cost function of ridge regression is the linear regression cost plus λ times the sum of the squared coefficients.
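Written out, one common form of the ridge cost function is the following. The notation is an assumption for illustration: $n$ samples, $p$ features, predictions $\hat{y}_i$, and slopes $\beta_1, \dots, \beta_p$; the intercept is conventionally left unpenalized.

$$
J_{\text{ridge}}(\beta) = \frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2 + \lambda \sum_{j=1}^{p} \beta_j^2
$$

Libraries may scale the two terms differently. In scikit-learn, for example, λ is exposed as the `alpha` parameter.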

Here lambda (λ) is the penalty parameter. It penalizes large coefficients, which discourages overfitting and helps the model work better on new data.

If λ = 0, the cost function of ridge regression is the same as the cost function of linear regression.
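A minimal sketch makes the role of λ concrete. It assumes a single feature and no intercept, so that the cost (1/n) Σ (y − b·x)² + λ·b² has a simple closed-form minimizer; the function name `ridge_slope` and the data are hypothetical.

```python
def ridge_slope(xs, ys, lam):
    """Slope b minimizing (1/n) * sum((y - b*x)**2) + lam * b**2 (no intercept)."""
    n = len(xs)
    mean_xy = sum(x * y for x, y in zip(xs, ys)) / n
    mean_xx = sum(x * x for x in xs) / n
    # Setting the derivative to zero gives b = mean_xy / (mean_xx + lam)
    return mean_xy / (mean_xx + lam)


# Hypothetical data lying exactly on y = 2x
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

for lam in [0.0, 1.0, 7.5, 100.0]:
    print(f"lambda = {lam:>5}: slope = {ridge_slope(xs, ys, lam):.4f}")
# lambda =   0.0: slope = 2.0000   (identical to ordinary least squares)
# lambda =   1.0: slope = 1.7647
# lambda =   7.5: slope = 1.0000
# lambda = 100.0: slope = 0.1395
```

The slope shrinks steadily as λ grows, but it stays above zero for every finite λ.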

What’s Lasso Regression (L1 Regularization)?

Lasso regression (Least Absolute Shrinkage and Selection Operator) introduces a penalty equal to the sum of the absolute values of the coefficients.

The main purpose of Lasso regression is to reduce the number of features, and hence it can be used for feature selection.

The cost function for lasso regression is the linear regression cost plus λ times the sum of the absolute values of the coefficients.
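Written out, one common form of the lasso cost function is the following. The notation is an assumption for illustration: $n$ samples, $p$ features, predictions $\hat{y}_i$, and slopes $\beta_1, \dots, \beta_p$.

$$
J_{\text{lasso}}(\beta) = \frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2 + \lambda \sum_{j=1}^{p} \left| \beta_j \right|
$$

As with ridge, libraries may scale the squared-error term differently, which changes the numerical value of λ but not the idea.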

If we have many features that are irrelevant to our model, their slopes will be very small. The L1 penalty pushes such small slopes all the way to zero, so those features drop out of the model, and this is how feature selection is performed.
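This zeroing behaviour can be sketched for the simplest case. The example assumes a single feature and no intercept, where minimizing (1/n) Σ (y − b·x)² + λ·|b| reduces to a soft-thresholding rule; the function name `lasso_slope` and the data are hypothetical.

```python
def lasso_slope(xs, ys, lam):
    """Slope b minimizing (1/n) * sum((y - b*x)**2) + lam * abs(b) (no intercept)."""
    n = len(xs)
    rho = sum(x * y for x, y in zip(xs, ys)) / n  # feature-target association
    z = sum(x * x for x in xs) / n
    threshold = lam / 2.0
    # Soft-thresholding: a weak association is set to exactly zero
    if rho > threshold:
        return (rho - threshold) / z
    if rho < -threshold:
        return (rho + threshold) / z
    return 0.0


xs = [1.0, 2.0, 3.0, 4.0]
relevant_y = [2.0, 4.0, 6.0, 8.0]      # strongly related to x
irrelevant_y = [0.1, -0.1, 0.1, -0.1]  # essentially unrelated to x

print(f"{lasso_slope(xs, relevant_y, 0.2):.4f}")    # 1.9867 (slightly shrunk from 2)
print(f"{lasso_slope(xs, irrelevant_y, 0.0):.4f}")  # -0.0067 (tiny least-squares slope)
print(f"{lasso_slope(xs, irrelevant_y, 0.2):.4f}")  # 0.0000 (feature removed)
```

With λ = 0.2 the tiny slope of the unrelated target becomes exactly zero, while the strong slope is only slightly reduced.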

Ridge vs. Lasso: Key Differences

- Penalty: Ridge uses L2 regularization (squares of the coefficients), whereas Lasso uses L1 regularization (absolute values of the coefficients).

- Effect on Coefficients: Ridge regression shrinks coefficients but does not set any to zero. Lasso regression can shrink some coefficients to zero, effectively performing variable selection.

- Model Complexity: Ridge regression is useful when all predictors should stay in the model, each with a reduced impact. Lasso regression simplifies the model by excluding less important predictors.

Practical implementation of Ridge and Lasso Regression

```python
# Import necessary libraries
import numpy as np
import pandas as pd
import seaborn as sns
from sklearn.model_selection import train_test_split, cross_val_score, GridSearchCV
from sklearn.linear_model import Ridge, Lasso
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import mean_squared_error, r2_score

# Load the tips dataset from seaborn
tips = sns.load_dataset('tips')

# Prepare the features and target variable
X = tips[['total_bill', 'size']]
y = tips['tip']

# Split the data into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Standardize the features (fit on the training set only, then apply to the test set)
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)
X_test_scaled = scaler.transform(X_test)

# Define the parameter grid for alpha (the regularization strength, λ)
alpha_values = {'alpha': np.logspace(-4, 4, 50)}

# Ridge Regression with GridSearchCV
ridge = Ridge()
ridge_cv = GridSearchCV(ridge, alpha_values, cv=5, scoring='r2')
ridge_cv.fit(X_train_scaled, y_train)

# Best Ridge model
ridge_best = ridge_cv.best_estimator_
ridge_pred = ridge_best.predict(X_test_scaled)

# Evaluate Ridge Regression
ridge_mse = mean_squared_error(y_test, ridge_pred)
ridge_r2 = r2_score(y_test, ridge_pred)
ridge_best_alpha = ridge_cv.best_params_['alpha']
print(f"Ridge Regression MSE: {ridge_mse}")
print(f"Ridge Regression R-squared: {ridge_r2}")
print(f"Best Ridge alpha: {ridge_best_alpha}")

# Lasso Regression with GridSearchCV
lasso = Lasso(max_iter=10000)  # Increase max_iter to ensure convergence
lasso_cv = GridSearchCV(lasso, alpha_values, cv=5, scoring='r2')
lasso_cv.fit(X_train_scaled, y_train)

# Best Lasso model
lasso_best = lasso_cv.best_estimator_
lasso_pred = lasso_best.predict(X_test_scaled)

# Evaluate Lasso Regression
lasso_mse = mean_squared_error(y_test, lasso_pred)
lasso_r2 = r2_score(y_test, lasso_pred)
lasso_best_alpha = lasso_cv.best_params_['alpha']
print(f"Lasso Regression MSE: {lasso_mse}")
print(f"Lasso Regression R-squared: {lasso_r2}")
print(f"Best Lasso alpha: {lasso_best_alpha}")
```
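The grid search above chooses alpha by 5-fold cross-validation. The idea behind that selection can be sketched in plain Python. This is a simplified illustration, not scikit-learn's implementation: it assumes a single feature with no intercept, contiguous unshuffled folds, and mean squared error as the score to minimize (the code above maximizes R-squared instead). All function names and data here are hypothetical.

```python
def ridge_slope(xs, ys, lam):
    """Slope b minimizing (1/n) * sum((y - b*x)**2) + lam * b**2 (no intercept)."""
    n = len(xs)
    mean_xy = sum(x * y for x, y in zip(xs, ys)) / n
    mean_xx = sum(x * x for x in xs) / n
    return mean_xy / (mean_xx + lam)


def mse(xs, ys, slope):
    """Mean squared error of the predictions slope * x."""
    return sum((y - slope * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


def k_fold_indices(n, k):
    """Split the indices 0..n-1 into k contiguous folds of near-equal size."""
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def cv_score(xs, ys, lam, k=5):
    """Average held-out MSE over k folds for one value of lam."""
    scores = []
    for fold in k_fold_indices(len(xs), k):
        held_out = set(fold)
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        test_x = [xs[i] for i in fold]
        test_y = [ys[i] for i in fold]
        slope = ridge_slope(train_x, train_y, lam)  # fit on the other folds
        scores.append(mse(test_x, test_y, slope))   # score on the held-out fold
    return sum(scores) / k


def grid_search(xs, ys, lam_grid, k=5):
    """Return the lam from lam_grid with the lowest cross-validated MSE."""
    return min(lam_grid, key=lambda lam: cv_score(xs, ys, lam, k))


# Hypothetical data: y = 2x plus small fixed noise
xs = [float(i) for i in range(1, 11)]
noise = [0.3, -0.2, 0.1, -0.4, 0.2, -0.1, 0.4, -0.3, 0.0, 0.1]
ys = [2.0 * x + e for x, e in zip(xs, noise)]

lam_grid = [0.0, 0.01, 0.1, 1.0, 10.0]
best_lam = grid_search(xs, ys, lam_grid)
print(f"Best lambda by 5-fold CV: {best_lam}")
```

Each candidate value is scored only on data the model did not see during fitting, which is why the selected value reflects performance on new data rather than fit to the training set.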