Introduction

Within the subject of machine studying, creating sturdy and correct predictive fashions is a main goal. Ensemble studying strategies excel at enhancing mannequin efficiency, with bagging, brief for bootstrap aggregating, taking part in a vital function in decreasing variance and bettering mannequin stability. This text explores bagging, explaining its ideas, functions, and nuances, and demonstrates the way it makes use of a number of fashions to enhance prediction accuracy and reliability.

Overview

- Perceive the basic idea of Bagging and its goal in decreasing variance and enhancing mannequin stability.

- Describe the steps concerned in placing Bagging into apply, equivalent to making ready the dataset, bootstrapping, coaching the mannequin, producing predictions, and merging predictions.

- Acknowledge the numerous advantages of bagging, together with its means to cut back variation, mitigate overfitting, stay resilient within the face of outliers, and be utilized to a wide range of machine studying issues.

- Achieve sensible expertise by implementing Bagging for a classification job utilizing the Wine dataset in Python, using the scikit-learn library to create and consider a BaggingClassifier.

What’s Bagging?

Bagging is a machine studying ensemble methodology aimed toward bettering the reliability and accuracy of predictive models. It includes producing a number of subsets of the coaching information utilizing random sampling with substitute. These subsets are then used to coach a number of base fashions, equivalent to choice bushes or neural networks.

When making predictions, the outputs from these base fashions are mixed, typically via averaging (for regression) or voting (for classification), to supply the ultimate prediction. Bagging reduces overfitting by creating variety among the many fashions and enhances general efficiency by reducing variance and growing robustness.

Implementation Steps of Bagging

Right here’s a normal define of implementing Bagging:

- Dataset Preparation: Clear and preprocess your dataset. Cut up it into coaching and take a look at units.

- Bootstrap Sampling: Randomly pattern from the coaching information with substitute to create a number of bootstrap samples. Every pattern usually has the identical measurement as the unique dataset.

- Mannequin Coaching: Prepare a base mannequin (e.g., choice tree, neural community) on every bootstrap pattern. Every mannequin is educated independently.

- Prediction Era: Use every educated mannequin to foretell the take a look at information.

- Combining Predictions: Combination the predictions from all fashions utilizing strategies like majority voting for classification or averaging for regression.

- Analysis: Assess the ensemble’s efficiency on the take a look at information utilizing metrics like accuracy, F1 rating, or imply squared error.

- Hyperparameter Tuning: Alter the hyperparameters of the bottom fashions or the ensemble as wanted, utilizing strategies like cross-validation.

- Deployment: As soon as glad with the ensemble’s efficiency, deploy it to make predictions on new information.

Additionally Learn: Top 10 Machine Learning Algorithms to Use in 2024

Understanding Ensemble Studying

To extend efficiency general, ensemble studying integrates the predictions of a number of fashions. By combining the insights from a number of fashions, this methodology often produces forecasts which might be extra correct than these of anyone mannequin alone.

Well-liked ensemble strategies embody:

- Bagging: Includes coaching a number of base fashions on totally different subsets of the coaching information created via random sampling with substitute.

- Boosting: A sequential methodology the place every mannequin focuses on correcting the errors of its predecessors, with in style algorithms like AdaBoost and XGBoost.

- Random Forest: An ensemble of choice bushes, every educated on a random subset of options and information, with last predictions made by aggregating particular person tree predictions.

- Stacking: Combines the predictions of a number of base fashions utilizing a meta-learner to supply the ultimate prediction.

Advantages of Bagging

- Variance Discount: By coaching a number of fashions on totally different information subsets, Bagging reduces variance, resulting in extra steady and dependable predictions.

- Overfitting Mitigation: The range amongst base fashions helps the ensemble generalize higher to new information.

- Robustness to Outliers: Aggregating a number of fashions’ predictions reduces the impression of outliers and noisy information factors.

- Parallel Coaching: Coaching particular person fashions may be parallelized, rushing up the method, particularly with giant datasets or complicated fashions.

- Versatility: Bagging may be utilized to numerous base learners, making it a versatile method.

- Simplicity: The idea of random sampling with substitute and mixing predictions is simple to know and implement.

Functions of Bagging

Bagging, often known as Bootstrap Aggregating, is a flexible method used throughout many areas of machine studying. Right here’s a have a look at the way it helps in numerous duties:

- Classification: Bagging combines predictions from a number of classifiers educated on totally different information splits, making the general outcomes extra correct and dependable.

- Regression: In regression issues, bagging helps by averaging the outputs of a number of regressors, resulting in smoother and extra correct predictions.

- Anomaly Detection: By coaching a number of fashions on totally different information subsets, bagging improves how nicely anomalies are noticed, making it extra immune to noise and outliers.

- Function Choice: Bagging may help establish an important options by coaching fashions on totally different function subsets. This reduces overfitting and improves mannequin efficiency.

- Imbalanced Knowledge: In classification issues with uneven class distributions, bagging helps steadiness the lessons inside every information subset. This results in higher predictions for much less frequent lessons.

- Constructing Highly effective Ensembles: Bagging is a core a part of complicated ensemble strategies like Random Forests and Stacking. It trains various fashions on totally different information subsets to realize higher general efficiency.

- Time-Sequence Forecasting: Bagging improves the accuracy and stability of time-series forecasts by coaching on numerous historic information splits, capturing a wider vary of patterns and traits.

- Clustering: Bagging helps discover extra dependable clusters, particularly in noisy or high-dimensional information. That is achieved by coaching a number of fashions on totally different information subsets and figuring out constant clusters throughout them.

Bagging in Python: A Temporary Tutorial

Allow us to now discover tutorial on bagging in Python.

# Importing mandatory libraries

from sklearn.ensemble import BaggingClassifier

from sklearn.tree import DecisionTreeClassifier

from sklearn.datasets import load_wine

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

# Load the Wine dataset

wine = load_wine()

X = wine.information

y = wine.goal

# Cut up the dataset into coaching and testing units

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3,

random_state=42)

# Initialize the bottom classifier (on this case, a choice tree)

base_classifier = DecisionTreeClassifier()

# Initialize the BaggingClassifier

bagging_classifier = BaggingClassifier(base_estimator=base_classifier,

n_estimators=10, random_state=42)

# Prepare the BaggingClassifier

bagging_classifier.match(X_train, y_train)

# Make predictions on the take a look at set

y_pred = bagging_classifier.predict(X_test)

# Calculate accuracy

accuracy = accuracy_score(y_test, y_pred)

print("Accuracy:", accuracy)

This instance demonstrates easy methods to use the BaggingClassifier from scikit-learn to carry out Bagging for classification duties utilizing the Wine dataset.

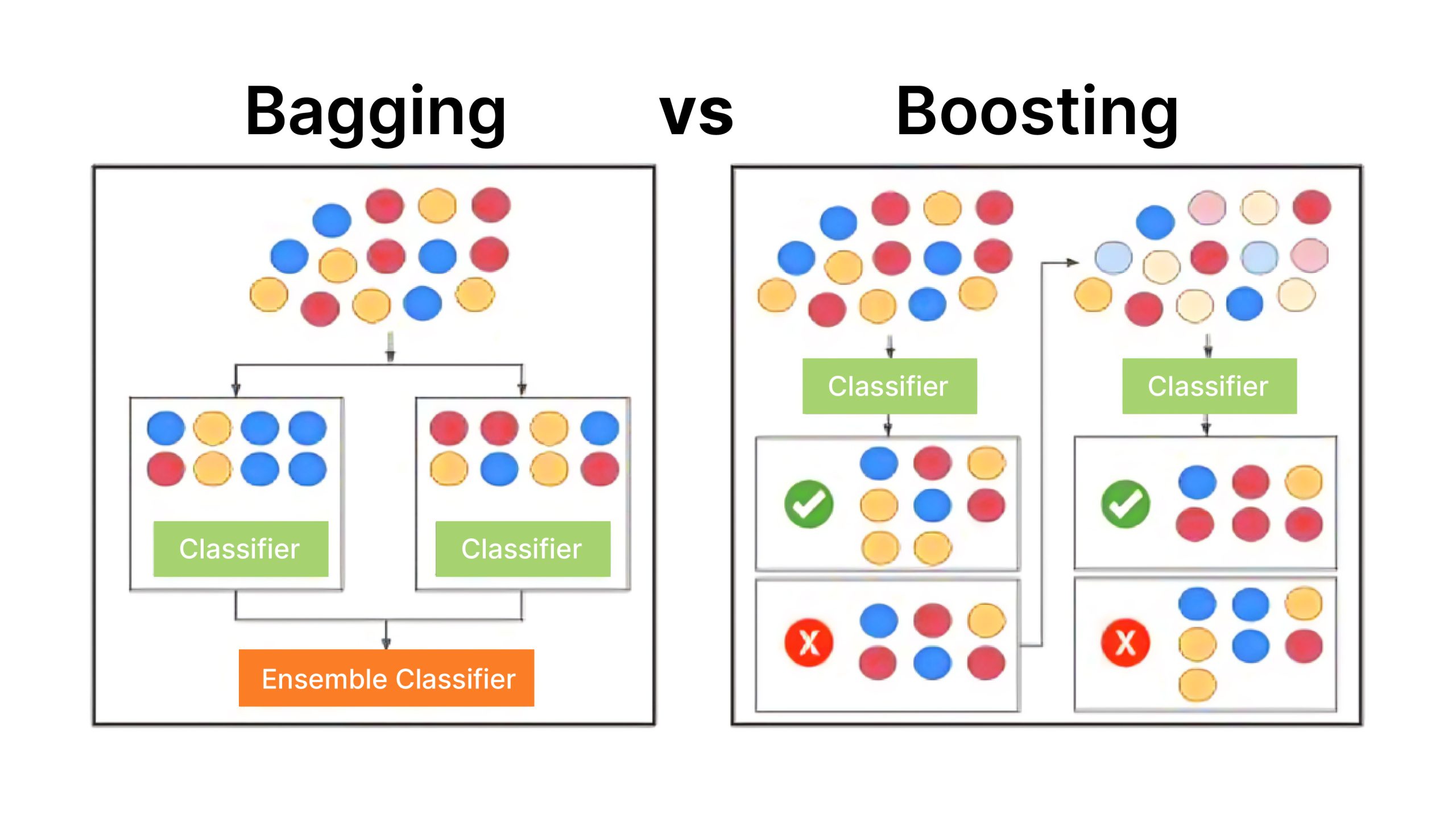

Variations Between Bagging and Boosting

Allow us to now discover distinction between bagging and boosting.

| Function | Bagging | Boosting |

| Sort of Ensemble | Parallel ensemble methodology | Sequential ensemble methodology |

| Base Learners | Skilled in parallel on totally different subsets of the information | Skilled sequentially, correcting earlier errors |

| Weighting of Knowledge | All information factors equally weighted | Misclassified factors given extra weight |

| Discount of Bias/Variance | Primarily reduces variance | Primarily reduces bias |

| Dealing with of Outliers | Resilient to outliers | Extra delicate to outliers |

| Robustness | Usually sturdy | Much less sturdy to outliers |

| Mannequin Coaching Time | Could be parallelized | Usually slower as a result of sequential coaching |

| Examples | Random Forest | AdaBoost, Gradient Boosting, XGBoost |

Conclusion

Bagging is a robust but easy ensemble methodology that strengthens mannequin efficiency by reducing variation, enhancing generalization, and growing resilience. Its ease of use and skill to coach fashions in parallel make it in style throughout numerous functions.

Ceaselessly Requested Questions

A. Bagging in machine studying reduces variance by introducing variety among the many base fashions. Every mannequin is educated on a special subset of the information, and when their predictions are mixed, errors are likely to cancel out. This results in extra steady and dependable predictions.

A. Bagging may be computationally intensive as a result of it includes coaching a number of fashions. Nevertheless, the coaching of particular person fashions may be parallelized, which might mitigate a few of the computational prices.

A. Bagging and Boosting are each ensemble strategies however makes use of totally different method. Bagging trains base fashions in parallel on totally different information subsets and combines their predictions to cut back variance. Boosting trains base fashions sequentially, with every mannequin specializing in correcting the errors of its predecessors, aiming to cut back bias.